User:Michiexile/MATH198/Lecture 4

Product

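Recall the construction of a cartesian product of two sets: $A\times B = \{(a,b) : a\in A, b\in B\}$. We have functions $p_A: A\times B\to A$ and $p_B: A\times B\to B$ extracting the two sets from the product, and we can take any two functions $f: A\to A'$ and $g: B\to B'$ and take them together to form a function $f\times g: A\times B\to A'\times B'$.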

Similarly, we can form the type of pairs of Haskell types: Pair s t = (s,t). For the pair type, we have canonical functions fst :: (s,t) -> s and snd :: (s,t) -> t extracting the components. And given two functions f :: s -> s' and g :: t -> t', there is a function f *** g :: (s,t) -> (s',t').
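As a small sketch of the pair construction, the following uses (***) from Control.Arrow on two component functions. The functions double and shout, and the names both and both', are hypothetical and chosen only for illustration.

```haskell
import Control.Arrow ((***))

-- Hypothetical component functions, chosen only for illustration.
double :: Int -> Int
double = (* 2)

shout :: String -> String
shout s = s ++ "!"

-- (***) applies one function to each component of a pair.
both :: (Int, String) -> (Int, String)
both = double *** shout

-- The same map written out by hand using the projections fst and snd.
both' :: (Int, String) -> (Int, String)
both' p = (double (fst p), shout (snd p))
```

Both definitions should agree on every pair; the second one makes visible that the combined function is determined by what it does on each component.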

An element of the pair is completely determined by the two elements included in it. Hence, if we have a pair of generalized elements $q_1: V\to A$ and $q_2: V\to B$, we can find a unique generalized element $q: V\to A\times B$ such that composing it with the projection arrows gives us the original elements back.

This argument indicates to us a possible definition that avoids talking about elements in sets in the first place, and we are led to the

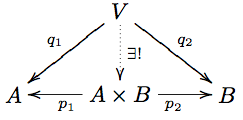

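Definition A product of two objects $A, B$ in a category $C$ is an object $A\times B$ equipped with arrows $p_1: A\times B\to A$ and $p_2: A\times B\to B$ such that for any other object $V$ with arrows $q_1: V\to A$ and $q_2: V\to B$, there is a unique arrow $q: V\to A\times B$ such that the diagram above commutes, that is, $p_1\circ q = q_1$ and $p_2\circ q = q_2$. The diagram $A \xleftarrow{p_1} A\times B \xrightarrow{p_2} B$ is called a product cone if it is a diagram of a product with the projection arrows from its definition.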

In the category of sets, the unique map is given by $q(v) = (q_1(v), q_2(v))$. In the Haskell category, it is given by the combinator (&&&) :: (a -> b) -> (a -> c) -> a -> (b,c).
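To see the product property at work in Haskell, here is a minimal sketch that builds the mediating arrow with (&&&) and checks pointwise that composing it with the projections returns the original arrows. The arrows q1 and q2 and the helper commutesAt are hypothetical names introduced for this example.

```haskell
import Control.Arrow ((&&&))

-- Hypothetical arrows out of a common source type Int.
q1 :: Int -> Bool
q1 = even

q2 :: Int -> String
q2 = show

-- The mediating arrow into the product type (Bool, String).
q :: Int -> (Bool, String)
q = q1 &&& q2

-- Commutativity of the product diagram, checked at a single point:
-- fst . q should agree with q1, and snd . q with q2.
commutesAt :: Int -> Bool
commutesAt v = fst (q v) == q1 v && snd (q v) == q2 v
```

A pointwise check like this is of course no proof, but it shows what the two triangles of the diagram say in code.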

We tend to talk about the product. The justification for this lies in the first interesting

Proposition If $P$ and $P'$ are both products for $A, B$, then they are isomorphic.

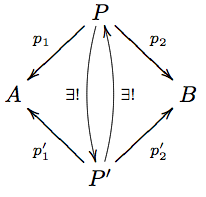

Proof Consider the diagram

Both vertical arrows are given by the product property of the two product cones involved. Their compositions are endo-arrows of $P$ and of $P'$, such that in each case we get an instance of the product diagram with $V = P$ (or $P'$), $q_1 = p_1$ and $q_2 = p_2$. There is, by the product property, only one endo-arrow that can make this diagram commute - but both the composition of the two arrows and the identity arrow itself make the diagram commute. Therefore, the composition has to be the identity. QED.
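The proposition can be illustrated in Haskell with two candidate products for the same pair of types: the built-in tuple (a, b) and a separately declared type Pair a b. In the sketch below, the projections pfst and psnd, the helper pairing, and the maps toTuple and fromTuple are hypothetical names for this example; each of the two maps is the mediating arrow supplied by one of the product cones. We ignore undefined values here.

```haskell
import Control.Arrow ((&&&))

-- A second candidate product for the types a and b.
data Pair a b = Pair a b
  deriving (Eq, Show)

-- Projections making Pair a b into a product cone.
pfst :: Pair a b -> a
pfst (Pair x _) = x

psnd :: Pair a b -> b
psnd (Pair _ y) = y

-- Mediating arrow into the tuple product, built from Pair's projections.
toTuple :: Pair a b -> (a, b)
toTuple = pfst &&& psnd

-- Mediating arrow into Pair for arbitrary arrows f and g.
pairing :: (v -> a) -> (v -> b) -> v -> Pair a b
pairing f g v = Pair (f v) (g v)

-- Mediating arrow into Pair, built from the tuple's projections.
fromTuple :: (a, b) -> Pair a b
fromTuple = pairing fst snd
```

Composing toTuple and fromTuple in either order should give back the value we started with, which is the isomorphism the proof constructs.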

We can expand the binary product to higher order products easily - instead of pairs of arrows, we have families of arrows, and all the diagrams carry over to the larger case.

Binary functions

Functions into a product help define the product in the first place, and function as elements of the product. Functions from a product, on the other hand, allow us to put a formalism around the idea of functions of several variables.

So a function of two variables, of types A and B, is a function f :: (A,B) -> C. The Haskell idiom for the same thing is A -> B -> C: a function taking one argument and returning a function of a single variable. This idiom, together with the curry/uncurry procedure, is tightly connected to this viewpoint, and will reemerge below, as well as when we talk about adjunctions later on.
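A minimal sketch of the two viewpoints, using the Prelude functions curry and uncurry; the names addPair, addCurried and addPair' are hypothetical and only serve the example.

```haskell
-- A function of two variables, written as an arrow out of a product.
addPair :: (Int, Int) -> Int
addPair (x, y) = x + y

-- The same function in the usual Haskell idiom: it takes one argument
-- and returns a function of a single variable.
addCurried :: Int -> Int -> Int
addCurried = curry addPair

-- Going back from the curried form to the product form.
addPair' :: (Int, Int) -> Int
addPair' = uncurry addCurried
```

The curried form can be partially applied, for instance map (addCurried 1) over a list, which is one practical reason it is the preferred idiom.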

Coproduct

The product came, in part, out of considering the pair construction. One alternative way to write the Pair a b type is:

data Pair a b = Pair a b

and the resulting type is isomorphic, in Hask, to the product type we discussed above.

This is one of two basic things we can do in a data type declaration, and corresponds to the record types in Computer Science jargon.

The other thing we can do is to form a union type, by something like

data Union a b = Left a | Right b

which takes on either a value of type a or of type b, depending on what constructor we use.

This type guarantees the existence of two functions

Left :: a -> Union a b

Right :: b -> Union a b

Similarly, in the category of sets we have the disjoint union $S\coprod T = S\times 0 \cup T\times 1$, which also comes with functions $i_S: S\to S\coprod T$ and $i_T: T\to S\coprod T$.

We can use all this to mimic the product definition. The directions of the inclusions indicate that we may well want the dualization of the definition. Thus we define:

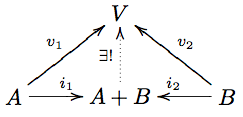

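Definition A coproduct $A+B$ of objects $A, B$ in a category $C$ is an object equipped with arrows $i_1: A\to A+B$ and $i_2: B\to A+B$ such that for any other object $V$ with arrows $q_1: A\to V$ and $q_2: B\to V$, there is a unique arrow $q: A+B\to V$ such that the diagram above commutes, that is, $q\circ i_1 = q_1$ and $q\circ i_2 = q_2$. The diagram $A \xrightarrow{i_1} A+B \xleftarrow{i_2} B$ is called a coproduct cocone, and the arrows are inclusion arrows.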

For sets, we need to insist that instead of just any products $S\times 0$ and $T\times 1$, we use the specific construction taking pairs for the coproduct to work out well. The issue here is that the categorical product is not defined as one single construction, but rather from how it behaves with respect to the arrows involved.

With this caveat, however, the coproduct in Set really is the disjoint union sketched above.

For Hask, the coproduct is the type construction of Union above - more usually written Either a b.
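In Haskell the mediating arrow out of the coproduct is given by the Prelude function either. The following sketch uses two hypothetical arrows q1 and q2 into the common target type String.

```haskell
-- Hypothetical arrows from the two summands into a common target.
q1 :: Int -> String
q1 n = "number " ++ show n

q2 :: Bool -> String
q2 b = "flag " ++ show b

-- The mediating arrow out of the coproduct Either Int Bool.
-- It satisfies q . Left = q1 and q . Right = q2.
q :: Either Int Bool -> String
q = either q1 q2
```

Here Left and Right play the role of the inclusion arrows, and either is dual to the combinator (&&&) used for products.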

And following closely in the dualization of the things we did for products, there is a first

Proposition If $P$ and $P'$ are both coproducts for some pair $A, B$ in a category $C$, then they are isomorphic.

The proof follows the exact pattern of the corresponding proposition for products.

Algebra of datatypes

Recall from Lecture 3 that we can consider endofunctors as container datatypes. Some of the more obvious such container datatypes include:

data 1 a = Empty

data T a = T a

These are the data type that has only a single element, and the data type that contains exactly one value.

Using these, we can generate a whole slew of further datatypes. First off, we can generate a data type with any finite number $n$ of elements by $n = 1 + 1 + \dots + 1$ ($n$ times). Remember that the coproduct construction for data types allows us to know which summand of the coproduct a given part is in, so the single elements in all the 1s in the definition of n here are all distinguishable, thus giving the final type the required number of elements.

Of note among these is the data type Bool = 2 - the Boolean data type, characterized by having exactly two elements.
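As a sketch of the identification Bool = 2 = 1 + 1, we can model the one-element type by the unit type () and the sum by Either. This is an assumption of the example: it reads 1 as a plain type rather than as the container 1 a. The names Two, toBool and fromBool are hypothetical.

```haskell
-- 2 = 1 + 1, with the one-element type modelled by ().
type Two = Either () ()

-- The two summands are distinguishable, so Two has exactly two elements.
toBool :: Two -> Bool
toBool (Left ())  = False
toBool (Right ()) = True

fromBool :: Bool -> Two
fromBool False = Left ()
fromBool True  = Right ()
```

Which summand is sent to False and which to True is an arbitrary choice; either choice gives an isomorphism.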

Furthermore, we can note that $1\times T = T$, with the isomorphism given by the maps

f (Empty, T x) = T x

g (T x) = (Empty, T x)

Thus we have the capacity to add and multiply types with each other. We can verify, for any types $R, S, T$, that $R\times(S+T) = R\times S + R\times T$.

We can thus make sense of types like $T^3 + 2T^2$ (either a triple of single values, or one out of two tagged pairs of single values).
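The distributive law $R\times(S+T) = R\times S + R\times T$, which lets us manipulate such polynomial types, can be witnessed in Haskell by a pair of mutually inverse functions. In this sketch products are modelled by tuples and sums by Either; the names distribute and factor are hypothetical.

```haskell
-- R * (S + T)  ->  R * S + R * T
distribute :: (r, Either s t) -> Either (r, s) (r, t)
distribute (r, Left s)  = Left (r, s)
distribute (r, Right t) = Right (r, t)

-- R * S + R * T  ->  R * (S + T)
factor :: Either (r, s) (r, t) -> (r, Either s t)
factor (Left (r, s))  = (r, Left s)
factor (Right (r, t)) = (r, Right t)
```

Composing the two functions in either order should return the original value, which is what the equality sign between the two types stands for.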

This allows us to start working out a calculus of data types with versatile expressive power. We can produce recursive data type definitions by using equations to define data types, which then allow a direct translation back into Haskell data type definitions, such as $List = 1 + T\times List$.
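As a sketch of that translation, the equation List = 1 + T * List (the one used in the derivations that follow) could be written in Haskell as below. The constructor names Nil and Cons and the conversion functions are choices made for this example, not fixed by the equation.

```haskell
-- List = 1 + T * List:
-- Nil is the summand 1, Cons is the summand T * List.
data List a = Nil | Cons a (List a)
  deriving (Eq, Show)

-- Conversion to and from the built-in list type, which has the same shape.
toBuiltin :: List a -> [a]
toBuiltin Nil         = []
toBuiltin (Cons x xs) = x : toBuiltin xs

fromBuiltin :: [a] -> List a
fromBuiltin = foldr Cons Nil
```

Each summand of the equation becomes one constructor, and each factor of a product becomes one field of that constructor.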

The real power of this way of rewriting types comes in the recognition that we can use algebraic methods to reason about our data types. For instance:

List = 1 + T * List

= 1 + T * (1 + T * List)

= 1 + T * 1 + T * T * List

= 1 + T + T * T * List

so a list is either empty, contains one element, or contains at least two elements. Using ideas from the theory of power series, or from continued fractions, we can go further and analyze data types through intermediate steps that seem completely bizarre, but that arrive at important properties. Again, an easy example for illustration:

List = 1 + T * List -- and thus

List - T * List = 1 -- even though (-) doesn't make sense for data types

(1 - T) * List = 1 -- still ignoring that (-)...

List = 1 / (1 - T) -- even though (/) doesn't make sense for data types

= 1 + T + T*T + T*T*T + ... -- by the geometric series identity

and hence, we can conclude - using purely formal algebraic steps in between - that a list by the given definition consists of either an empty list, a single value, a pair of values, a triple of values, etc.
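The finite expansion List = 1 + T + T * T * List derived earlier can be made concrete as a Haskell type with one constructor per summand. This is only a sketch: the type Unrolled and the functions unroll and reroll are hypothetical names, and the built-in list type stands in for List.

```haskell
-- List = 1 + T + T * T * List, one constructor per summand.
data Unrolled a
  = Empty            -- the summand 1
  | One a            -- the summand T
  | More a a [a]     -- the summand T * T * List
  deriving (Eq, Show)

-- Sort a list into the summand it belongs to.
unroll :: [a] -> Unrolled a
unroll []           = Empty
unroll [x]          = One x
unroll (x : y : zs) = More x y zs

-- Forget the case distinction again.
reroll :: Unrolled a -> [a]
reroll Empty         = []
reroll (One x)       = [x]
reroll (More x y zs) = x : y : zs
```

The two functions are mutually inverse, so the algebraic rewriting of the equation corresponds to an actual isomorphism of data types. The infinite series would need one constructor per length, which a finite declaration cannot express directly.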

At this point, I'd recommend that anyone interested in more perspectives on this approach to data types, and in the things one may do with them, read the following references:

Blog posts and Wikipages

The ideas in this last section originate in a sequence of research papers from Conor McBride - however, these are research papers in logic, and thus come with all the quirks such research papers usually carry. Instead, the ideas have been described in several places by various blog authors from the Haskell community - which make for a more accessible but much less strict read.

- http://en.wikibooks.org/wiki/Haskell/Zippers -- On zippers, and differentiating types

- http://blog.lab49.com/archives/3011 -- On the polynomial data type calculus

- http://blog.lab49.com/archives/3027 -- On differentiating types and zippers

- http://comonad.com/reader/2008/generatingfunctorology/ -- Different recursive type constructions

- http://strictlypositive.org/slicing-jpgs/ -- Lecture slides for similar themes.

- http://blog.sigfpe.com/2009/09/finite-differences-of-types.html -- Finite differences of types - generalizing the differentiation approach.

- http://homepage.mac.com/sigfpe/Computing/fold.html -- Develops the underlying theory for our algebra of datatypes in some detail.

Homework

Full credit for this homework requires 4 out of 5 exercises. Partial credit is given.

- What are the products in the category $C(P)$ of a poset $P$? What are the coproducts?

- Prove that any two coproducts are isomorphic.

- Prove that any two exponentials are isomorphic.

- Write down the type declaration for at least two of the example data types from the section on the algebra of datatypes, and write a Functor implementation for each.

- * Read up on Zippers and on differentiating data structures. Find the derivative of List, as defined above. Prove that $\partial List = List\times List$. Find the derivatives of BinaryTree, and of GenericTree.