Difference between revisions of "Typeclassopedia"

Revision as of 14:47, 16 November 2011

- By Brent Yorgey, byorgey@cis.upenn.edu

- As published 12 March 2009, issue 13 of the Monad.Reader, with tiny November 2011 updates by Geheimdienst

- Alternate formats: PDF / tex source / bibliography

The standard Haskell libraries feature a number of type classes with algebraic or category-theoretic underpinnings. Becoming a fluent Haskell hacker requires intimate familiarity with them all, yet acquiring this familiarity often involves combing through a mountain of tutorials, blog posts, mailing list archives, and IRC logs.

The goal of this article is to serve as a starting point for the student of Haskell wishing to gain a firm grasp of its standard type classes. The essentials of each type class are introduced, with examples, commentary, and extensive references for further reading.

Introduction

Have you ever had any of the following thoughts?

- What the heck is a monoid, and how is it different from a monad?

- I finally figured out how to use Parsec with do-notation, and someone told me I should use something called Applicative instead. Um, what?

- Someone in the #haskell IRC channel used (***), and when I asked lambdabot to tell me its type, it printed out scary gobbledygook that didn't even fit on one line! Then someone used fmap fmap fmap and my brain exploded.

- When I asked how to do something I thought was really complicated, people started typing things like zip.ap fmap.(id &&& wtf) and the scary thing is that they worked! Anyway, I think those people must actually be robots because there's no way anyone could come up with that in two seconds off the top of their head.

If you have, look no further! You, too, can write and understand concise, elegant, idiomatic Haskell code with the best of them.

There are two keys to an expert Haskell hacker's wisdom:

- Understand the types.

- Gain a deep intuition for each type class and its relationship to other type classes, backed up by familiarity with many examples.

It's impossible to overstate the importance of the first; the patient student of type signatures will uncover many profound secrets. Conversely, anyone ignorant of the types in their code is doomed to eternal uncertainty. “Hmm, it doesn't compile ... maybe I'll stick in an fmap here ... nope, let's see ... maybe I need another (.) somewhere? ... um ...”

The second key—gaining deep intuition, backed by examples—is also important, but much more difficult to attain. A primary goal of this article is to set you on the road to gaining such intuition. However—

- There is no royal road to Haskell. —Euclid

∗ See Brent Yorgey's Abstraction, intuition, and the “monad tutorial fallacy”.

This article can only be a starting point, since good intuition comes from hard work, not from learning the right metaphor ∗. Anyone who reads and understands all of it will still have an arduous journey ahead, but sometimes a good starting point makes a big difference.

It should be noted that this is not a Haskell tutorial; it is assumed that the reader is already familiar with the basics of Haskell, including the standard Prelude, the type system, data types, and type classes.

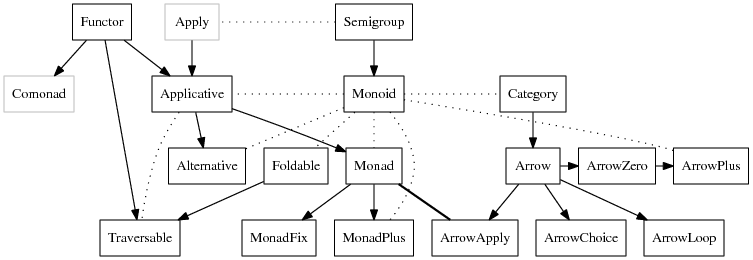

The type classes we will be discussing and their interrelationships:

∗ When Typeclassopedia was originally written, Pointed and Comonad were in the category-extras library. It has since been deprecated and they have moved to the pointed package and the comonad package. —Geheimdienst, Nov 2011

- Solid arrows point from the general to the specific; that is, if there is an arrow from Foo to Bar it means that every Bar is (or should be, or can be made into) a Foo.

- Dotted arrows indicate some other sort of relationship.

- Monad and ArrowApply are equivalent.

- Pointed and Comonad are greyed out since they are not actually (yet) in the standard Haskell libraries ∗.

One more note before we begin. I've seen “type class” written as one word, “typeclass,” but let's settle this once and for all: the correct spelling uses two words (the title of this article notwithstanding), as evidenced by, for example, the Haskell 98 Revised Report, early papers on type classes like Type classes in Haskell and Type classes: exploring the design space, and Hudak et al.'s history of Haskell.

We now begin with the simplest type class of all: Functor.

Functor

The Functor class (haddock) is the most basic and ubiquitous type class in the Haskell libraries. A simple intuition is that a Functor represents a “container” of some sort, along with the ability to apply a function uniformly to every element in the container. For example, a list is a container of elements, and we can apply a function to every element of a list using map. A binary tree is also a container of elements, and it's not hard to come up with a way to recursively apply a function to every element in a tree.

Another intuition is that a Functor represents some sort of “computational context.” This intuition is generally more useful, but is more difficult to explain, precisely because it is so general. Some examples later should help to clarify the Functor-as-context point of view.

In the end, however, a Functor is simply what it is defined to be; doubtless there are many examples of Functor instances that don't exactly fit either of the above intuitions. The wise student will focus their attention on definitions and examples, without leaning too heavily on any particular metaphor. Intuition will come, in time, on its own.

Definition

The type class declaration for Functor:

    class Functor f where
        fmap :: (a -> b) -> f a -> f b

Functor is exported by the Prelude, so no special imports are needed to use it.

First, the f a and f b in the type signature for fmap tell us that f isn't just a type; it is a type constructor which takes another type as a parameter. (A more precise way to say this is that the kind of f must be * -> *.) For example, Maybe is such a type constructor: Maybe is not a type in and of itself, but requires another type as a parameter, like Maybe Integer. So it would not make sense to say instance Functor Integer, but it could make sense to say instance Functor Maybe.

Now look at the type of fmap: it takes any function from a to b, and a value of type f a, and outputs a value of type f b. From the container point of view, the intention is that fmap applies a function to each element of a container, without altering the structure of the container. From the context point of view, the intention is that fmap applies a function to a value without altering its context. Let's look at a few specific examples.

Instances

∗ Recall that [] has two meanings in Haskell: it can either stand for the empty list, or, as here, it can represent the list type constructor (pronounced “list-of”). In other words, the type [a] (list-of-a) can also be written ([] a).

∗ You might ask why we need a separate map function. Why not just do away with the current list-only map function, and rename fmap to map instead? Well, that's a good question. The usual argument is that someone just learning Haskell, when using map incorrectly, would much rather see an error about lists than about Functors.

As noted before, the list constructor [] is a functor ∗; we can use the standard list function map to apply a function to each element of a list ∗. The Maybe type constructor is also a functor, representing a container which might hold a single element. The function fmap g has no effect on Nothing (there are no elements to which g can be applied), and simply applies g to the single element inside a Just. Alternatively, under the context interpretation, the list functor represents a context of nondeterministic choice; that is, a list can be thought of as representing a single value which is nondeterministically chosen from among several possibilities (the elements of the list). Likewise, the Maybe functor represents a context with possible failure. These instances are:

    instance Functor [] where
        fmap _ []     = []
        fmap g (x:xs) = g x : fmap g xs
        -- or we could just say fmap = map

    instance Functor Maybe where
        fmap _ Nothing  = Nothing
        fmap g (Just a) = Just (g a)
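The binary tree mentioned earlier makes a good third example. The following is only a sketch: the Tree type and the names example and doubled are made up for illustration and are not part of any standard library.

```haskell
-- A hypothetical binary tree, defined here only for illustration.
data Tree a = Leaf | Node (Tree a) a (Tree a)
  deriving (Show, Eq)

-- fmap rebuilds the same shape, applying g to every stored element.
instance Functor Tree where
  fmap _ Leaf         = Leaf
  fmap g (Node l x r) = Node (fmap g l) (g x) (fmap g r)

example :: Tree Int
example = Node (Node Leaf 1 Leaf) 2 (Node Leaf 3 Leaf)

-- Same structure as example, with every element doubled.
doubled :: Tree Int
doubled = fmap (* 2) example
```

Note that the instance has no choice about the shape of the result: only the elements change.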

As an aside, in idiomatic Haskell code you will often see the letter f used to stand for both an arbitrary Functor and an arbitrary function. In this tutorial, I will use f only to represent Functors, and g or h to represent functions, but you should be aware of the potential confusion. In practice, what f stands for should always be clear from the context, by noting whether it is part of a type or part of the code.

There are other Functor instances in the standard libraries; below are a few. Note that some of these instances are not exported by the Prelude; to access them, you can import Control.Monad.Instances.

- Either e is an instance of Functor; Either e a represents a container which can contain either a value of type a, or a value of type e (often representing some sort of error condition). It is similar to Maybe in that it represents possible failure, but it can carry some extra information about the failure as well.

- ((,) e) represents a container which holds an “annotation” of type e along with the actual value it holds.

- ((->) e), the type of functions which take a value of type e as a parameter, is a Functor. It would be clearer to write it as (e ->), by analogy with an operator section like (1 +), but that syntax is not allowed. However, you can certainly think of it as (e ->). As a container, (e -> a) represents a (possibly infinite) set of values of a, indexed by values of e. Alternatively, and more usefully, (e ->) can be thought of as a context in which a value of type e is available to be consulted in a read-only fashion. This is also why ((->) e) is sometimes referred to as the reader monad; more on this later.

- IO is a Functor; a value of type IO a represents a computation producing a value of type a which may have I/O effects. If m computes the value x while producing some I/O effects, then fmap g m will compute the value g x while producing the same I/O effects.

- Many standard types from the containers library (such as Tree, Map, Sequence, and Stream) are instances of Functor. A notable exception is Set, which cannot be made a Functor in Haskell (although it is certainly a mathematical functor) since it requires an Ord constraint on its elements; fmap must be applicable to any types a and b.

A good exercise is to implement Functor instances for Either e, ((,) e), and ((->) e).

Laws

As far as the Haskell language itself is concerned, the only requirement to be a Functor is an implementation of fmap with the proper type. Any sensible Functor instance, however, will also satisfy the functor laws, which are part of the definition of a mathematical functor. There are two:

fmap id = id

fmap (g . h) = (fmap g) . (fmap h)

∗ Technically, these laws make f and fmap together an endofunctor on Hask, the category of Haskell types (ignoring ⊥, which is a party pooper). See Wikibook: Category theory.

Together, these laws ensure that fmap g does not change the structure of a container, only the elements. Equivalently, and more simply, they ensure that fmap g changes a value without altering its context ∗.

The first law says that mapping the identity function over every item in a container has no effect. The second says that mapping a composition of two functions over every item in a container is the same as first mapping one function, and then mapping the other.

As an example, the following code is a “valid” instance of Functor (it typechecks), but it violates the functor laws. Do you see why?

    -- Evil Functor instance
    instance Functor [] where
        fmap _ []     = []
        fmap g (x:xs) = g x : g x : fmap g xs

Any Haskeller worth their salt would reject this code as a gruesome abomination.
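If you want to see the abomination misbehave, here is a small sketch. Since the real list instance already exists, the evil fmap is attached to a made-up newtype, EvilList; that wrapper and the two Bool names are assumptions for illustration only.

```haskell
-- Hypothetical wrapper so the evil instance does not clash with the real one.
newtype EvilList a = EvilList [a]
  deriving (Show, Eq)

-- Same behaviour as the evil instance: every element is emitted twice.
instance Functor EvilList where
  fmap g (EvilList xs) = EvilList (go xs)
    where
      go []       = []
      go (y : ys) = g y : g y : go ys

-- First law: fmap id should be id, but the list doubles in length.
firstLawHolds :: Bool
firstLawHolds = fmap id (EvilList [1, 2, 3 :: Int]) == EvilList [1, 2, 3]

-- Second law: one pass duplicates once, two passes duplicate twice.
secondLawHolds :: Bool
secondLawHolds =
  fmap ((+ 1) . (* 2)) (EvilList [1, 2 :: Int])
    == (fmap (+ 1) . fmap (* 2)) (EvilList [1, 2])
```

Both values evaluate to False: the instance changes the structure of the container, which is exactly what the laws forbid.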

Intuition

There are two fundamental ways to think about fmap. The first has already been touched on: it takes two parameters, a function and a container, and applies the function “inside” the container, producing a new container. Alternately, we can think of fmap as applying a function to a value in a context (without altering the context).

Just like all other Haskell functions of “more than one parameter,” however, fmap is actually curried: it does not really take two parameters, but takes a single parameter and returns a function. For emphasis, we can write fmap's type with extra parentheses: fmap :: (a -> b) -> (f a -> f b). Written in this form, it is apparent that fmap transforms a “normal” function (g :: a -> b) into one which operates over containers/contexts (fmap g :: f a -> f b). This transformation is often referred to as a lift; fmap “lifts” a function from the “normal world” into the “f world.”
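A tiny sketch of lifting in action; the function names here are invented for the example. Partially applying fmap to one ordinary function yields a different lifted function for each Functor.

```haskell
-- A "normal" function on plain values.
increment :: Int -> Int
increment = (+ 1)

-- The same function lifted into the "Maybe world" ...
incrementMaybe :: Maybe Int -> Maybe Int
incrementMaybe = fmap increment

-- ... and into the "list world".
incrementAll :: [Int] -> [Int]
incrementAll = fmap increment
```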

Further reading

A good starting point for reading about the category theory behind the concept of a functor is the excellent Haskell wikibook page on category theory.

Pointed

∗ The Pointed type class lives in the pointed library, moved from the category-extras library. The point function was originally named pure. —Geheimdienst, Nov 2011

The Pointed type class represents pointed functors. It is not actually a type class in the standard libraries ∗. But it could be, and it's useful in understanding a few other type classes, notably Applicative and Monad, so let's pretend for a minute.

Given a Functor, the Pointed class represents the additional ability to put a value into a “default context.” Often, this corresponds to creating a container with exactly one element, but it is more general than that. The type class declaration for Pointed is:

    class Functor f => Pointed f where
        point :: a -> f a      -- aka pure, singleton, return, unit

Most of the standard Functor instances could also be instances of Pointed—for example, the Maybe instance of Pointed is point = Just; there are many possible implementations for lists, the most natural of which is point x = [x]; for ((->) e) it is ... well, I'll let you work it out. (Just follow the types!)

One example of a Functor which is not Pointed is ((,) e). If you try implementing point :: a -> (e,a) you will quickly see why: since the type e is completely arbitrary, there is no way to generate a value of type e out of thin air! However, as we will see, ((,) e) can be made Pointed if we place an additional restriction on e which allows us to generate a default value of type e (the most common solution is to make e an instance of Monoid).
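To make this concrete, here is a sketch that declares a local stand-in for Pointed (the real class lives in the pointed package and may differ in detail) together with the Maybe and list instances described above, plus the Monoid-constrained pair instance. It assumes a GHC recent enough that the Prelude exports Monoid. The ((->) e) instance is deliberately left out, since that one is yours to work out.

```haskell
-- Local stand-in for the Pointed class, declared here for illustration only.
class Functor f => Pointed f where
  point :: a -> f a

instance Pointed Maybe where
  point = Just

instance Pointed [] where
  point x = [x]

-- With a Monoid constraint on e, mempty supplies the default annotation.
instance Monoid e => Pointed ((,) e) where
  point x = (mempty, x)

-- A value paired with the empty String as its annotation.
annotated :: (String, Char)
annotated = point 'x'

-- The law fmap g . point = point . g, checked at one sample input.
lawHoldsForMaybe :: Bool
lawHoldsForMaybe = lhs == rhs
  where
    lhs, rhs :: Maybe Int
    lhs = (fmap (+ 1) . point) 3
    rhs = (point . (+ 1)) 3
```

Checking one input proves nothing in general, of course; it is only meant to show what the two sides of the law look like.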

∗ For those interested in category theory, this law states precisely that point is a natural transformation from the identity functor to f.

The Pointed class has only one law ∗:

fmap g . point = point . g

∗ ... modulo ⊥, seq, and assuming a lawful Functor instance.

However, you need not worry about it: this law is actually a so-called “free theorem” guaranteed by parametricity (see Wadler's Theorems for free!); it's impossible to write an instance of Pointed which does not satisfy it ∗.

Applicative

A somewhat newer addition to the pantheon of standard Haskell type classes, applicative functors (see their haddock) represent an abstraction lying exactly in between Functor and Monad, first described by McBride and Paterson. The title of their classic paper, Applicative Programming with Effects, gives a hint at the intended intuition behind the Applicative type class. It encapsulates certain sorts of “effectful” computations in a functionally pure way, and encourages an “applicative” programming style. Exactly what these things mean will be seen later.

Definition

The Applicative class adds a single capability to Pointed functors. Recall that Functor allows us to lift a “normal” function to a function on computational contexts. But fmap doesn't allow us to apply a function which is itself in a context to a value in another context. Applicative gives us just such a tool. Here is the type class declaration for Applicative, as defined in Control.Applicative:

    class Functor f => Applicative f where
        pure  :: a -> f a
        (<*>) :: f (a -> b) -> f a -> f b

Note that every Applicative must also be a Functor. In fact, as we will see, fmap can be implemented using the Applicative methods, so every Applicative is a functor whether we like it or not; the Functor constraint forces us to be honest.

∗ Recall that ($) is just function application: f $ x = f x.

As always, it's crucial to understand the type signature of (<*>). The best way of thinking about it comes from noting that the type of (<*>) is similar to the type of ($) ∗, but with everything enclosed in an f. In other words, (<*>) is just function application within a computational context. The type of (<*>) is also very similar to the type of fmap; the only difference is that the first parameter is f (a -> b), a function in a context, instead of a “normal” function (a -> b).

Of course, pure looks rather familiar. If we actually had a Pointed type class, Applicative could instead be defined as:

    class Pointed f => Applicative' f where
        (<*>) :: f (a -> b) -> f a -> f b

Laws

∗ See haddock for Applicative, Applicative programming with effects

There are several laws that Applicative instances should satisfy ∗, but only one is crucial to developing intuition, because it specifies how Applicative should relate to Functor (the other four mostly specify the exact sense in which pure deserves its name). This law is:

fmap g x = pure g <*> x

It says that mapping a pure function g over a context x is the same as first injecting g into a context with pure, and then applying it to x with (<*>). In other words, we can decompose fmap into two more atomic operations: injection into a context, and application within a context. The Control.Applicative module also defines (<$>) as a synonym for fmap, so the above law can also be expressed as:

g <$> x = pure g <*> x.
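As a quick sanity check, the law can be tried out on sample values for two standard instances. This is only a sketch with invented names, and it assumes GHC 7.10 or later, where the Prelude exports pure, (<$>), and (<*>); on older compilers you would import Control.Applicative.

```haskell
-- Assumes GHC 7.10+, where the Prelude exports the Applicative methods.

-- fmap g x versus pure g <*> x, at Maybe.
lawForMaybe :: Bool
lawForMaybe = fmap (* 2) (Just 21) == (pure (* 2) <*> Just (21 :: Int))

-- The same law written with (<$>), at lists.
lawForList :: Bool
lawForList = ((+ 1) <$> [1, 2, 3]) == (pure (+ 1) <*> [1, 2, 3 :: Int])
```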

Instances

Most of the standard types which are instances of Functor are also instances of Applicative.

Maybe can easily be made an instance of Applicative; writing such an instance is left as an exercise for the reader.

The list type constructor [] can actually be made an instance of Applicative in two ways; essentially, it comes down to whether we want to think of lists as ordered collections of elements, or as contexts representing multiple results of a nondeterministic computation (see Wadler's How to replace failure by a list of successes).

Let's first consider the collection point of view. Since there can only be one instance of a given type class for any particular type, one or both of the list instances of Applicative need to be defined for a newtype wrapper; as it happens, the nondeterministic computation instance is the default, and the collection instance is defined in terms of a newtype called ZipList. This instance is:

    newtype ZipList a = ZipList { getZipList :: [a] }

    instance Applicative ZipList where
        pure = undefined   -- exercise
        (ZipList gs) <*> (ZipList xs) = ZipList (zipWith ($) gs xs)

To apply a list of functions to a list of inputs with (<*>), we just match up the functions and inputs elementwise, and produce a list of the resulting outputs. In other words, we “zip” the lists together with function application, ($); hence the name ZipList. As an exercise, determine the correct definition of pure—there is only one implementation that satisfies the law (see section “Laws”).

The other Applicative instance for lists, based on the nondeterministic computation point of view, is:

    instance Applicative [] where
        pure x    = [x]
        gs <*> xs = [ g x | g <- gs, x <- xs ]

Instead of applying functions to inputs pairwise, we apply each function to all the inputs in turn, and collect all the results in a list.

Now we can write nondeterministic computations in a natural style. To add the numbers 3 and 4 deterministically, we can of course write (+) 3 4. But suppose instead of 3 we have a nondeterministic computation that might result in 2, 3, or 4; then we can write

pure (+) <*> [2,3,4] <*> pure 4

or, more idiomatically,

(+) <$> [2,3,4] <*> pure 4.
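To compare the two list instances side by side, here is a small sketch using the ZipList newtype exported by Control.Applicative. The value names are made up, and GHC 7.10 or later is assumed so that (<$>) and (<*>) come from the Prelude.

```haskell
import Control.Applicative (ZipList (..))

-- The expression from the text: each possible first argument is added to 4.
sums :: [Int]
sums = (+) <$> [2, 3, 4] <*> pure 4

-- Default (nondeterministic) instance: every combination of the two lists.
allPairs :: [Int]
allPairs = (+) <$> [1, 2] <*> [10, 20]

-- ZipList instance: arguments are matched up position by position.
zipped :: [Int]
zipped = getZipList ((+) <$> ZipList [1, 2] <*> ZipList [10, 20])
```

Here sums is [6,7,8], allPairs is [11,21,12,22], and zipped is [11,22].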

There are several other Applicative instances as well:

- IO is an instance of Applicative, and behaves exactly as you would think: when g <$> m1 <*> m2 <*> m3 is executed, the effects from the mi's happen in order from left to right.

- ((,) a) is an Applicative, as long as a is an instance of Monoid (section Monoid). The a values are accumulated in parallel with the computation.

- The Control.Applicative module defines the Const type constructor; a value of type Const a b simply contains an a. This is an instance of Applicative for any Monoid a; this instance becomes especially useful in conjunction with things like Foldable (section Foldable).

- The WrappedMonad and WrappedArrow newtypes make any instances of Monad (section Monad) or Arrow (section Arrow) respectively into instances of Applicative; as we will see when we study those type classes, both are strictly more expressive than Applicative, in the sense that the Applicative methods can be implemented in terms of their methods.
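A short sketch of the ((,) a) and Const instances from the list above. The value names are invented, and a recent GHC is assumed so that the Prelude provides the operators.

```haskell
import Control.Applicative (Const (..))

-- The String annotations are combined with mappend (here, concatenation)
-- while the function is applied to the two Int payloads.
logged :: (String, Int)
logged = (+) <$> ("read x; ", 3) <*> ("read y; ", 4)

-- Const carries only the monoidal part; there is no payload to apply.
collected :: [String]
collected = getConst (Const ["a"] <*> Const ["b"])
```

Here logged is ("read x; read y; ", 7) and collected is ["a","b"].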

Intuition

McBride and Paterson's paper introduces the notation [[g x1 x2 ... xn]] to denote function application in a computational context. If each xi has type f ti for some applicative functor f, and g has type t1 -> t2 -> ... -> tn -> t, then the entire expression [[g x1 ... xn]] has type f t. You can think of this as applying a function to multiple “effectful” arguments. In this sense, the double bracket notation is a generalization of fmap, which allows us to apply a function to a single argument in a context.

Why do we need Applicative to implement this generalization of fmap? Suppose we use fmap to apply g to the first parameter x1. Then we get something of type f (t2 -> ... t), but now we are stuck: we can't apply this function-in-a-context to the next argument with fmap. However, this is precisely what (<*>) allows us to do.

This suggests the proper translation of the idealized notation into Haskell, namely

g <$> x1 <*> x2 <*> ... <*> xn,

recalling that Control.Applicative defines (<$>) as convenient infix shorthand for fmap. This is what is meant by an “applicative style”—effectful computations can still be described in terms of function application; the only difference is that we have to use the special operator (<*>) for application instead of simple juxtaposition.
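Here is a sketch of the applicative style with three effectful arguments, where the effect is possible failure. The Point type and parsePoint are hypothetical names; readMaybe comes from Text.Read in base.

```haskell
import Text.Read (readMaybe)

-- A hypothetical three-field record, used only for illustration.
data Point = Point Int Int Int
  deriving (Show, Eq)

-- Point <$> x1 <*> x2 <*> x3: ordinary application of a three-argument
-- constructor, except that each argument may fail to parse.
parsePoint :: String -> String -> String -> Maybe Point
parsePoint a b c = Point <$> readMaybe a <*> readMaybe b <*> readMaybe c
```

For instance, parsePoint "1" "2" "3" is Just (Point 1 2 3), while parsePoint "1" "oops" "3" is Nothing.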

Further reading

There are many other useful combinators in the standard libraries implemented in terms of pure and (<*>): for example, (*>), (<*), (<**>), (<$), and so on (see haddock for Applicative). Judicious use of such secondary combinators can often make code using Applicatives much easier to read.

McBride and Paterson's original paper is a treasure-trove of information and examples, as well as some perspectives on the connection between Applicative and category theory. Beginners will find it difficult to make it through the entire paper, but it is extremely well-motivated—even beginners will be able to glean something from reading as far as they are able.

Conal Elliott has been one of the biggest proponents of Applicative. For example, the Pan library for functional images and the reactive library for functional reactive programming (FRP) make key use of it; his blog also contains many examples of Applicative in action. Building on the work of McBride and Paterson, Elliott also built the TypeCompose library, which embodies the observation (among others) that Applicative types are closed under composition; therefore, Applicative instances can often be automatically derived for complex types built out of simpler ones.

Although the Parsec parsing library (paper) was originally designed for use as a monad, in its most common use cases an Applicative instance can be used to great effect; Bryan O'Sullivan's blog post is a good starting point. If the extra power provided by Monad isn't needed, it's usually a good idea to use Applicative instead.

A couple other nice examples of Applicative in action include the ConfigFile and HSQL libraries and the formlets library.

Monad

It's a safe bet that if you're reading this article, you've heard of monads—although it's quite possible you've never heard of Applicative before, or Arrow, or even Monoid. Why are monads such a big deal in Haskell? There are several reasons.

- Haskell does, in fact, single out monads for special attention by making them the framework in which to construct I/O operations.

- Haskell also singles out monads for special attention by providing a special syntactic sugar for monadic expressions: the do-notation.

- Monad has been around longer than various other abstract models of computation such as Applicative or Arrow.

- The more monad tutorials there are, the harder people think monads must be, and the more new monad tutorials are written by people who think they finally “get” monads (the monad tutorial fallacy).

I will let you judge for yourself whether these are good reasons.

In the end, despite all the hoopla, Monad is just another type class. Let's take a look at its definition.

Definition

The type class declaration for Monad (haddock) is:

    class Monad m where
        return :: a -> m a
        (>>=)  :: m a -> (a -> m b) -> m b
        (>>)   :: m a -> m b -> m b
        m >> n = m >>= \_ -> n

        fail   :: String -> m a

The Monad type class is exported by the Prelude, along with a few standard instances. However, many utility functions are found in Control.Monad, and there are also several instances (such as ((->) e)) defined in Control.Monad.Instances.

Let's examine the methods in the Monad class one by one. The type of return should look familiar; it's the same as pure. Indeed, return is pure, but with an unfortunate name. (Unfortunate, since someone coming from an imperative programming background might think that return is like the C or Java keyword of the same name, when in fact the similarities are minimal.) From a mathematical point of view, every monad is a pointed functor (indeed, an applicative functor), but for historical reasons, the Monad type class declaration unfortunately does not require this.

We can see that (>>) is a specialized version of (>>=), with a default implementation given. It is only included in the type class declaration so that specific instances of Monad can override the default implementation of (>>) with a more efficient one, if desired. Also, note that although _ >> n = n would be a type-correct implementation of (>>), it would not correspond to the intended semantics: the intention is that m >> n ignores the result of m, but not its effects.
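The difference between the two candidate definitions of (>>) shows up as soon as the first computation has an effect. In this sketch, naiveThen is a made-up name for the type-correct but wrong version, and the remaining names are likewise illustrative.

```haskell
-- Type-correct, but it throws away the effects of its first argument.
naiveThen :: Monad m => m a -> m b -> m b
naiveThen _ n = n

-- With the real (>>), the failure of the first computation is preserved.
realResult :: Maybe Int
realResult = (Nothing :: Maybe ()) >> Just 1

-- The naive version forgets that the first computation failed.
naiveResult :: Maybe Int
naiveResult = (Nothing :: Maybe ()) `naiveThen` Just 1

-- For lists, the "effect" of the first computation is having two results.
listResult :: [Int]
listResult = [1, 2 :: Int] >> [3]
```

Here realResult is Nothing, naiveResult is Just 1, and listResult is [3,3].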

The fail function is an awful hack that has no place in the Monad class; more on this later.

The only really interesting thing to look at—and what makes Monad strictly more powerful than Pointed or Applicative—is (>>=), which is often called bind. An alternative definition of Monad could look like:

    class Applicative m => Monad' m where
        (>>=) :: m a -> (a -> m b) -> m b

We could spend a while talking about the intuition behind (>>=)—and we will. But first, let's look at some examples.

Instances

Even if you don't understand the intuition behind the Monad class, you can still create instances of it by just seeing where the types lead you. You may be surprised to find that this actually gets you a long way towards understanding the intuition; at the very least, it will give you some concrete examples to play with as you read more about the Monad class in general. The first few examples are from the standard Prelude; the remaining examples are from the monad transformer library (mtl).

- The simplest possible instance of Monad is Identity (see haddock), which is described in Dan Piponi's highly recommended blog post on The Trivial Monad. Despite being “trivial,” it is a great introduction to the Monad type class, and contains some good exercises to get your brain working.

- The next simplest instance of Monad is Maybe. We already know how to write return/pure for Maybe. So how do we write (>>=)? Well, let's think about its type. Specializing for Maybe, we have

(>>=) :: Maybe a -> (a -> Maybe b) -> Maybe b.

  If the first argument to (>>=) is Just x, then we have something of type a (namely, x), to which we can apply the second argument, resulting in a Maybe b, which is exactly what we wanted. What if the first argument to (>>=) is Nothing? In that case, we don't have anything to which we can apply the a -> Maybe b function, so there's only one thing we can do: yield Nothing. This instance is:

    instance Monad Maybe where
        return = Just
        (Just x) >>= g = g x
        Nothing  >>= _ = Nothing

  We can already get a bit of intuition as to what is going on here: if we build up a computation by chaining together a bunch of functions with (>>=), as soon as any one of them fails, the entire computation will fail (because Nothing >>= f is Nothing, no matter what f is). The entire computation succeeds only if all the constituent functions individually succeed. So the Maybe monad models computations which may fail.

- The

Monadinstance for the list constructor[]is similar to itsApplicativeinstance; I leave its implementation as an exercise. Follow the types!

- Of course, the

IOconstructor is famously aMonad, but its implementation is somewhat magical, and may in fact differ from compiler to compiler. It is worth emphasizing that theIOmonad is the only monad which is magical. It allows us to build up, in an entirely pure way, values representing possibly effectful computations. The special valuemain, of typeIO (), is taken by the runtime and actually executed, producing actual effects. Every other monad is functionally pure, and requires no special compiler support. We often speak of monadic values as “effectful computations,” but this is because some monads allow us to write code as if it has side effects, when in fact the monad is hiding the plumbing which allows these apparent side effects to be implemented in a functionally pure way.

- As mentioned earlier,

((->) e)is known as the reader monad, since it describes computations in which a value of typeeis available as a read-only environment. It is worth trying to write aMonadinstance for((->) e)yourself.

- The

Control.Monad.Readermodule (haddock) provides theReader e atype, which is just a convenientnewtypewrapper around(e -> a), along with an appropriateMonadinstance and someReader-specific utility functions such asask(retrieve the environment),asks(retrieve a function of the environment), andlocal(run a subcomputation under a different environment).

- The

Control.Monad.Writermodule (haddock) provides theWritermonad, which allows information to be collected as a computation progresses.Writer w ais isomorphic to(a,w), where the output valueais carried along with an annotation or “log” of typew, which must be an instance ofMonoid(section Monoid); the special functiontellperforms logging.

- The

Control.Monad.Statemodule (haddock) provides theState s atype, anewtypewrapper arounds -> (a,s). Something of typeState s arepresents a stateful computation which produces anabut can access and modify the state of typesalong the way. The module also providesState-specific utility functions such asget(read the current state),gets(read a function of the current state),put(overwrite the state), andmodify(apply a function to the state).

- The Control.Monad.Cont module (haddock) provides the Cont monad, which represents computations in continuation-passing style. It can be used to suspend and resume computations, and to implement non-local transfers of control, co-routines, and other complex control structures, all in a functionally pure way. Cont has been called the “mother of all monads” because of its universal properties.
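As an illustration of the reader monad entry in the list above, here is a minimal sketch of a hand-rolled reader. The name Env is hypothetical (the library type is Reader), the instances are written for a recent GHC in which Applicative is a superclass of Monad, and ask and local only mimic the library functions of the same names.

```haskell
-- A hand-rolled reader monad: a newtype wrapper around (e -> a).
-- Env is a hypothetical name; the library version is Reader.
newtype Env e a = Env { runEnv :: e -> a }

instance Functor (Env e) where
  fmap f (Env g) = Env (f . g)

instance Applicative (Env e) where
  pure x = Env (const x)
  Env f <*> Env g = Env (\e -> f e (g e))

instance Monad (Env e) where
  -- Run the first computation, then run the continuation
  -- in the same environment.
  Env g >>= k = Env (\e -> runEnv (k (g e)) e)

-- Retrieve the environment.
ask :: Env e e
ask = Env id

-- Run a subcomputation under a modified environment.
local :: (e -> e) -> Env e a -> Env e a
local f (Env g) = Env (g . f)

-- Example: the environment is an indentation width.
indented :: String -> Env Int String
indented s = do
  n <- ask
  return (replicate n ' ' ++ s)

twoLines :: Env Int String
twoLines = do
  a <- indented "x"
  b <- local (+ 2) (indented "y")
  return (a ++ "|" ++ b)
```

Evaluating runEnv twoLines 2 indents "x" by two spaces and "y" by four, since local changes the environment only for the subcomputation it wraps.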

Intuition

Let's look more closely at the type of (>>=). The basic intuition is that it combines two computations into one larger computation. The first argument, m a, is the first computation. However, it would be boring if the second argument were just an m b; then there would be no way for the computations to interact with one another. So, the second argument to (>>=) has type a -> m b: a function of this type, given a result of the first computation, can produce a second computation to be run. In other words, x >>= k is a computation which runs x, and then uses the result(s) of x to decide what computation to run second, using the output of the second computation as the result of the entire computation.

Intuitively, it is this ability to use the output from previous computations to decide what computations to run next that makes Monad more powerful than Applicative. The structure of an Applicative computation is fixed, whereas the structure of a Monad computation can change based on intermediate results.

To see the increased power of Monad from a different point of view, let's see what happens if we try to implement (>>=) in terms of fmap, pure, and (<*>). We are given a value x of type m a, and a function k of type a -> m b, so the only thing we can do is apply k to x. We can't apply it directly, of course; we have to use fmap to lift it over the m. But what is the type of fmap k? Well, it's m a -> m (m b). So after we apply it to x, we are left with something of type m (m b)—but now we are stuck; what we really want is an m b, but there's no way to get there from here. We can add m's using pure, but we have no way to collapse multiple m's into one.

This ability to collapse multiple m's is exactly the ability provided by the function join :: m (m a) -> m a, and it should come as no surprise that an alternative definition of Monad can be given in terms of join:

class Applicative m => Monad'' m where
  join :: m (m a) -> m a

In fact, monads in category theory are defined in terms of return, fmap, and join (often called η, T, and μ in the mathematical literature). Haskell uses the equivalent formulation in terms of (>>=) instead of join since it is more convenient to use; however, sometimes it can be easier to think about Monad instances in terms of join, since it is a more “atomic” operation. (For example, join for the list monad is just concat.) An excellent exercise is to implement (>>=) in terms of fmap and join, and to implement join in terms of (>>=).
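For readers who want to check their answer to the exercise, one possible solution is sketched here. The names bindViaJoin and joinViaBind are hypothetical, chosen to avoid clashing with the library functions.

```haskell
import Control.Monad (join)

-- (>>=) from fmap and join: map the continuation over the
-- computation, then collapse the two layers of m.
bindViaJoin :: Monad m => m a -> (a -> m b) -> m b
bindViaJoin x k = join (fmap k x)

-- join from (>>=): bind the outer computation to the identity.
joinViaBind :: Monad m => m (m a) -> m a
joinViaBind mm = mm >>= id
```

In the list monad, joinViaBind behaves like concat, matching the remark above.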

Utility functions

The Control.Monad module (haddock) provides a large number of convenient utility functions, all of which can be implemented in terms of the basic Monad operations (return and (>>=) in particular). We have already seen one of them, namely, join. We also mention some other noteworthy ones here; implementing these utility functions oneself is a good exercise. For a more detailed guide to these functions, with commentary and example code, see Henk-Jan van Tuyl's tour.

liftM :: Monad m => (a -> b) -> m a -> m b. This should be familiar; of course, it is just fmap. The fact that we have both fmap and liftM is an unfortunate consequence of the fact that the Monad type class does not require a Functor instance, even though mathematically speaking, every monad is a functor. However, fmap and liftM are essentially interchangeable, since it is a bug (in a social rather than technical sense) for any type to be an instance of Monad without also being an instance of Functor.

ap :: Monad m => m (a -> b) -> m a -> m b should also be familiar: it is equivalent to (<*>), justifying the claim that the Monad interface is strictly more powerful than Applicative. We can make any Monad into an instance of Applicative by setting pure = return and (<*>) = ap.

sequence :: Monad m => [m a] -> m [a] takes a list of computations and combines them into one computation which collects a list of their results. It is again something of a historical accident that sequence has a Monad constraint, since it can actually be implemented only in terms of Applicative. There is also an additional generalization of sequence to structures other than lists, which will be discussed in the section on Traversable.

replicateM :: Monad m => Int -> m a -> m [a] is simply a combination of replicate and sequence.

when :: Monad m => Bool -> m () -> m () conditionally executes a computation, evaluating to its second argument if the test is True, and to return () if the test is False. A collection of other sorts of monadic conditionals can be found in the IfElse package.

mapM :: Monad m => (a -> m b) -> [a] -> m [b] maps its first argument over the second, and sequences the results. The forM function is just mapM with its arguments reversed; it is called forM since it models generalized for loops: the list [a] provides the loop indices, and the function a -> m b specifies the “body” of the loop for each index.

(=<<) :: Monad m => (a -> m b) -> m a -> m b is just (>>=) with its arguments reversed; sometimes this direction is more convenient since it corresponds more closely to function application.

(>=>) :: Monad m => (a -> m b) -> (b -> m c) -> a -> m c is sort of like function composition, but with an extra m on the result type of each function, and the arguments swapped. We'll have more to say about this operation later.

- The guard function is for use with instances of MonadPlus, which is discussed at the end of the Monoid section.

Many of these functions also have “underscored” variants, such as sequence_ and mapM_; these variants throw away the results of the computations passed to them as arguments, using them only for their side effects.
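Since implementing these utilities is suggested as an exercise, the following is one possible set of definitions using only return and (>>=). It is a sketch rather than the library source: the primed names and fish are hypothetical, chosen so that the definitions do not clash with the Prelude or Control.Monad.

```haskell
-- Apply a pure function to the result of a computation.
liftM' :: Monad m => (a -> b) -> m a -> m b
liftM' f m = m >>= \x -> return (f x)

-- Apply a computed function to a computed argument.
ap' :: Monad m => m (a -> b) -> m a -> m b
ap' mf mx = mf >>= \f -> mx >>= \x -> return (f x)

-- Run the computations left to right, collecting their results.
sequence' :: Monad m => [m a] -> m [a]
sequence' []       = return []
sequence' (m : ms) = m >>= \x -> sequence' ms >>= \xs -> return (x : xs)

mapM' :: Monad m => (a -> m b) -> [a] -> m [b]
mapM' f = sequence' . map f

replicateM' :: Monad m => Int -> m a -> m [a]
replicateM' n = sequence' . replicate n

when' :: Monad m => Bool -> m () -> m ()
when' test action = if test then action else return ()

-- Kleisli composition, i.e. (>=>).
fish :: Monad m => (a -> m b) -> (b -> m c) -> a -> m c
fish g h = \x -> g x >>= h
```

For example, sequence' [Just 1, Just 2] evaluates to Just [1,2], while a single Nothing in the list makes the whole result Nothing.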

Laws

There are several laws that instances of Monad should satisfy (see also the Monad laws wiki page). The standard presentation is:

return a >>= k = k a

m >>= return = m

m >>= (\x -> k x >>= h) = (m >>= k) >>= h

fmap f xs = xs >>= return . f = liftM f xs

The first and second laws express the fact that return behaves nicely: if we inject a value a into a monadic context with return, and then bind to k, it is the same as just applying k to a in the first place; if we bind a computation m to return, nothing changes. The third law essentially says that (>>=) is associative, sort of. The last law ensures that fmap and liftM are the same for types which are instances of both Functor and Monad—which, as already noted, should be every instance of Monad.

∗ I like to pronounce this operator “fish,” but that's probably not the canonical pronunciation ...

However, the presentation of the above laws, especially the third, is marred by the asymmetry of (>>=). It's hard to look at the laws and see what they're really saying. I prefer a much more elegant version of the laws, which is formulated in terms of (>=>) ∗. Recall that (>=>) “composes” two functions of type a -> m b and b -> m c. You can think of something of type a -> m b (roughly) as a function from a to b which may also have some sort of effect in the context corresponding to m. (Note that return is such a function.) (>=>) lets us compose these “effectful functions,” and we would like to know what properties (>=>) has. The monad laws reformulated in terms of (>=>) are:

return >=> g = g

g >=> return = g

(g >=> h) >=> k = g >=> (h >=> k)

∗ As fans of category theory will note, these laws say precisely that functions of type a -> m b are the arrows of a category with (>=>) as composition! Indeed, this is known as the Kleisli category of the monad m. It will come up again when we discuss Arrows.

Ah, much better! The laws simply state that return is the identity of (>=>), and that (>=>) is associative ∗. Working out the equivalence between these two formulations, given the definition g >=> h = \x -> g x >>= h, is left as an exercise.

There is also a formulation of the monad laws in terms of fmap, return, and join; for a discussion of this formulation, see the Haskell wikibook page on category theory.
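The (>=>) formulation of the laws can be spot-checked on concrete functions. The sketch below does this in the Maybe monad; safeRecip and safeSqrt are hypothetical examples, and checking a law at a few inputs is of course evidence rather than a proof.

```haskell
import Control.Monad ((>=>))

-- Two sample "effectful functions" in the Maybe monad.
safeRecip :: Double -> Maybe Double
safeRecip 0 = Nothing
safeRecip x = Just (1 / x)

safeSqrt :: Double -> Maybe Double
safeSqrt x
  | x < 0     = Nothing
  | otherwise = Just (sqrt x)

-- Each law, checked at a single input.
leftIdentity, rightIdentity, associativity :: Double -> Bool
leftIdentity  x = (return >=> safeRecip) x == safeRecip x
rightIdentity x = (safeRecip >=> return) x == safeRecip x
associativity x =
  ((safeRecip >=> safeSqrt) >=> safeRecip) x
    == (safeRecip >=> (safeSqrt >=> safeRecip)) x
```

Inputs such as 0, 4, and -4 exercise both the failing and the succeeding paths through the composed functions.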

do notation

Haskell's special do notation supports an “imperative style” of programming by providing syntactic sugar for chains of monadic expressions. The genesis of the notation lies in realizing that something like a >>= \x -> b >> c >>= \y -> d can be more readably written by putting successive computations on separate lines:

a >>= \x ->

b >>

c >>= \y ->

d

This emphasizes that the overall computation consists of four computations a, b, c, and d, and that x is bound to the result of a, and y is bound to the result of c (b, c, and d are allowed to refer to x, and d is allowed to refer to y as well). From here it is not hard to imagine a nicer notation:

do { x <- a ;

b ;

y <- c ;

d

}

(The curly braces and semicolons may optionally be omitted; the Haskell parser uses layout to determine where they should be inserted.) This discussion should make clear that do notation is just syntactic sugar. In fact, do blocks are recursively translated into monad operations (almost) like this:

do e ⇨ e

do { e; stmts } ⇨ e >> do { stmts }

do { v <- e; stmts } ⇨ e >>= \v -> do { stmts }

do { let decls; stmts} ⇨ let decls in do { stmts }

This is not quite the whole story, since v might be a pattern instead of a variable. For example, one can write

do (x:xs) <- foo
   bar x

but what happens if foo produces an empty list? Well, remember that ugly fail function in the Monad type class declaration? That's what happens. See section 3.14 of the Haskell Report for the full details. See also the discussion of MonadPlus and MonadZero in the section on other monoidal classes.

A final note on intuition: do notation plays very strongly to the “computational context” point of view rather than the “container” point of view, since the binding notation x <- m is suggestive of “extracting” a single x from m and doing something with it. But m may represent some sort of a container, such as a list or a tree; the meaning of x <- m is entirely dependent on the implementation of (>>=). For example, if m is a list, x <- m actually means that x will take on each value from the list in turn.
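To make the translation concrete, the sketch below writes the same list computation once with do notation and once with explicit (>>=), and adds a small Maybe example with a failable pattern. The function names are hypothetical; the behaviour of the failed match relies on fail for Maybe producing Nothing.

```haskell
-- A do block in the list monad: x and y each range over a list.
pairsDo :: [(Int, Char)]
pairsDo = do
  x <- [1, 2]
  y <- "ab"
  return (x, y)

-- The same computation after translating the do block into (>>=).
pairsBind :: [(Int, Char)]
pairsBind = [1, 2] >>= \x -> "ab" >>= \y -> return (x, y)

-- A pattern on the left of <- that can fail to match: for Maybe,
-- a non-matching value makes the whole computation Nothing.
headOf :: Maybe [Int] -> Maybe Int
headOf foo = do
  (x : _) <- foo
  return x
```

Both pairsDo and pairsBind produce every combination of an element of the first list with an element of the second, which is the sense in which x “takes on each value from the list in turn.”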

Monad transformers

One would often like to be able to combine two monads into one: for example, to have stateful, nondeterministic computations (State + []), or computations which may fail and can consult a read-only environment (Maybe + Reader), and so on. Unfortunately, monads do not compose as nicely as applicative functors (yet another reason to use Applicative if you don't need the full power that Monad provides), but some monads can be combined in certain ways.

The monad transformer library mtl provides a number of monad transformers, such as StateT, ReaderT, ErrorT (haddock), and (soon) MaybeT, which can be applied to other monads to produce a new monad with the effects of both. For example, StateT s Maybe is an instance of Monad; computations of type StateT s Maybe a may fail, and have access to a mutable state of type s. These transformers can be multiply stacked. One thing to keep in mind while using monad transformers is that the order of composition matters. For example, when a StateT s Maybe a computation fails, the state ceases being updated; on the other hand, the state of a MaybeT (State s) a computation may continue to be modified even after the computation has failed. (This may seem backwards, but it is correct. Monad transformers build composite monads “inside out”; for example, MaybeT (State s) a is isomorphic to s -> (Maybe a, s), whereas StateT s Maybe a is isomorphic to s -> Maybe (a, s). Lambdabot has an indispensable @unmtl command which you can use to “unpack” a monad transformer stack in this way.)
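The effect of stacking order can be seen without any library at all by working directly with the unpacked function types. The sketch below is an illustration under that assumption: the type synonyms, bind1, bind2, and the tick and fail helpers are all hypothetical names, hand-written to mirror what the real StateT s Maybe and MaybeT (State s) do.

```haskell
-- The two stacks, unpacked into plain functions.
type StateOverMaybe s a = s -> Maybe (a, s)   -- like StateT s Maybe a
type MaybeOverState s a = s -> (Maybe a, s)   -- like MaybeT (State s) a

-- Bind for the first stack: failure leaves no state behind.
bind1 :: StateOverMaybe s a -> (a -> StateOverMaybe s b) -> StateOverMaybe s b
bind1 m k = \s -> case m s of
  Nothing      -> Nothing
  Just (a, s') -> k a s'

-- Bind for the second stack: the state is threaded through
-- even when the result is Nothing.
bind2 :: MaybeOverState s a -> (a -> MaybeOverState s b) -> MaybeOverState s b
bind2 m k = \s -> case m s of
  (Nothing, s') -> (Nothing, s')
  (Just a, s')  -> k a s'

tick1 :: StateOverMaybe Int ()
tick1 s = Just ((), s + 1)

failNow1 :: StateOverMaybe Int ()
failNow1 _ = Nothing

tick2 :: MaybeOverState Int ()
tick2 s = (Just (), s + 1)

failNow2 :: MaybeOverState Int ()
failNow2 s = (Nothing, s)

-- Increment the counter, then fail, starting from 0.
run1 :: Maybe ((), Int)
run1 = (tick1 `bind1` \() -> failNow1) 0

run2 :: (Maybe (), Int)
run2 = (tick2 `bind2` \() -> failNow2) 0
```

Here run1 is Nothing, so the incremented counter is lost, while run2 is (Nothing, 1): the failure is recorded but the updated state survives.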

All monad transformers should implement the MonadTrans type class, defined in Control.Monad.Trans:

class MonadTrans t where
  lift :: Monad m => m a -> t m a

It allows arbitrary computations in the base monad m to be “lifted” into computations in the transformed monad t m. (Note that type application associates to the left, just like function application, so t m a = (t m) a. As an exercise, you may wish to work out t's kind, which is rather more interesting than most of the kinds we've seen up to this point.) However, you should only have to think about MonadTrans when defining your own monad transformers, not when using predefined ones.

∗ The only problem with this scheme is the quadratic number of instances required as the number of standard monad transformers grows—but as the current set of standard monad transformers seems adequate for most common use cases, this may not be that big of a deal.

There are also type classes such as MonadState, which provides state-specific methods like get and put, allowing you to conveniently use these methods not only with State, but with any monad which is an instance of MonadState—including MaybeT (State s), StateT s (ReaderT r IO), and so on. Similar type classes exist for Reader, Writer, Cont, IO, and others ∗.

There are two excellent references on monad transformers. Martin Grabmüller's Monad Transformers Step by Step is a thorough description, with running examples, of how to use monad transformers to elegantly build up computations with various effects. Cale Gibbard's article on how to use monad transformers is more practical, describing how to structure code using monad transformers to make writing it as painless as possible. Another good starting place for learning about monad transformers is a blog post by Dan Piponi.

MonadFix

The MonadFix class describes monads which support the special fixpoint operation mfix :: (a -> m a) -> m a, which allows the output of monadic computations to be defined via recursion. This is supported in GHC and Hugs by a special “recursive do” notation, mdo. For more information, see Levent Erkök's thesis, Value Recursion in Monadic Computations.
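A small sketch of mfix in action, using the MonadFix instance for Maybe from Control.Monad.Fix in base. The names ones and firstThree are hypothetical; the example relies on laziness, since the list being defined is infinite.

```haskell
import Control.Monad.Fix (mfix)

-- The output of the computation (the list xs) is defined in terms
-- of itself: an infinite list of ones, tied through mfix.
ones :: Maybe [Int]
ones = mfix (\xs -> Just (1 : xs))

-- Only a finite prefix is ever demanded.
firstThree :: Maybe [Int]
firstThree = fmap (take 3) ones
```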

Further reading

Philip Wadler was the first to propose using monads to structure functional programs. His paper is still a readable introduction to the subject.

Much of the monad transformer library mtl, including the Reader, Writer, State, and other monads, as well as the monad transformer framework itself, was inspired by Mark Jones's classic paper Functional Programming with Overloading and Higher-Order Polymorphism. It's still very much worth a read—and highly readable—after almost fifteen years.

There are, of course, numerous monad tutorials of varying quality.

A few of the best include Cale Gibbard's Monads as containers and Monads as computation; Jeff Newbern's All About Monads, a comprehensive guide with lots of examples; and Dan Piponi's You Could Have Invented Monads!, which features great exercises. If you just want to know how to use IO, you could consult the Introduction to IO. Even this is just a sampling; the monad tutorials timeline is a more complete list. (All these monad tutorials have prompted parodies like think of a monad ... as well as other kinds of backlash like Monads! (and Why Monad Tutorials Are All Awful) or Abstraction, intuition, and the “monad tutorial fallacy”.)

Other good monad references which are not necessarily tutorials include Henk-Jan van Tuyl's tour of the functions in Control.Monad, Dan Piponi's field guide, and Tim Newsham's What's a Monad?. There are also many blog articles which have been written on various aspects of monads; a collection of links can be found under Blog articles/Monads.

One of the quirks of the Monad class and the Haskell type system is that it is not possible to straightforwardly declare Monad instances for types which require a class constraint on their data, even if they are monads from a mathematical point of view. For example, Data.Set requires an Ord constraint on its data, so it cannot be easily made an instance of Monad. A solution to this problem was first described by Eric Kidd, and later made into a library named rmonad by Ganesh Sittampalam and Peter Gavin.

There are many good reasons for eschewing do notation; some have gone so far as to consider it harmful.

Monads can be generalized in various ways; for an exposition of one possibility, see Robert Atkey's paper on parameterized monads, or Dan Piponi's Beyond Monads.

For the categorically inclined, monads can be viewed as monoids (From Monoids to Monads) and also as closure operators (Triples and Closure). Derek Elkins's article in issue 13 of the Monad.Reader contains an exposition of the category-theoretic underpinnings of some of the standard Monad instances, such as State and Cont. There is also an alternative way to compose monads, using coproducts, as described by Lüth and Ghani, although this method has not (yet?) seen widespread use.

Links to many more research papers related to monads can be found under Research papers/Monads and arrows.

Monoid

A monoid is a set S together with a binary operation ⊕ which combines elements from S. The ⊕ operator is required to be associative (that is, (a ⊕ b) ⊕ c = a ⊕ (b ⊕ c), for any a, b, c which are elements of S), and there must be some element of S which is the identity with respect to ⊕. (If you are familiar with group theory, a monoid is like a group without the requirement that inverses exist.) For example, the natural numbers under addition form a monoid: the sum of any two natural numbers is a natural number; (a + b) + c = a + (b + c) for any natural numbers a, b, and c; and zero is the additive identity. The integers under multiplication also form a monoid, as do natural numbers under max, Boolean values under conjunction and disjunction, lists under concatenation, functions from a set to itself under composition ... Monoids show up all over the place, once you know to look for them.

Definition

The definition of the Monoid type class (defined in Data.Monoid; haddock) is:

class Monoid a where
  mempty  :: a
  mappend :: a -> a -> a

  mconcat :: [a] -> a
  mconcat = foldr mappend mempty

The mempty value specifies the identity element of the monoid, and mappend is the binary operation. The default definition for mconcat “reduces” a list of elements by combining them all with mappend, using a right fold. It is only in the Monoid class so that specific instances have the option of providing an alternative, more efficient implementation; usually, you can safely ignore mconcat when creating a Monoid instance, since its default definition will work just fine.

The Monoid methods are rather unfortunately named; they are inspired by the list instance of Monoid, where indeed mempty = [] and mappend = (++), but this is misleading since many monoids have little to do with appending (see these Comments from OCaml Hacker Brian Hurt on the haskell-cafe mailing list).

Laws

Of course, every Monoid instance should actually be a monoid in the mathematical sense, which implies these laws:

mempty `mappend` x = x

x `mappend` mempty = x

(x `mappend` y) `mappend` z = x `mappend` (y `mappend` z)
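As a concrete instance, here is a sketch of the “natural numbers under max” monoid mentioned in the introduction to this section, together with a function that checks the three laws at given sample points. The names MaxNat and lawsHold are hypothetical. The sketch assumes a recent version of base, in which the binary operation lives in the Semigroup superclass and mappend defaults to (<>); with the class exactly as shown above, one would define mappend directly instead.

```haskell
-- Natural numbers under max. The wrapper does not enforce
-- non-negativity; that is an assumption on how it is used.
newtype MaxNat = MaxNat Integer
  deriving (Eq, Show)

instance Semigroup MaxNat where
  MaxNat a <> MaxNat b = MaxNat (max a b)

instance Monoid MaxNat where
  -- 0 is the identity for max only because the carrier is the naturals.
  mempty = MaxNat 0

-- The two identity laws and associativity, checked at sample points.
lawsHold :: MaxNat -> MaxNat -> MaxNat -> Bool
lawsHold x y z =
     mempty `mappend` x == x
  && x `mappend` mempty == x
  && (x `mappend` y) `mappend` z == x `mappend` (y `mappend` z)
```

With this instance, mconcat (map MaxNat [2, 9, 4]) picks out the largest element using the default definition of mconcat.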

Instances

There are quite a few interesting Monoid instances defined in Data.Monoid.

[a] is a Monoid, with mempty = [] and mappend = (++). It is not hard to check that (x ++ y) ++ z = x ++ (y ++ z) for any lists x, y, and z, and that the empty list is the identity: [] ++ x = x ++ [] = x.

- As noted previously, we can make a monoid out of any numeric type under either addition or multiplication. However, since we can't have two instances for the same type, Data.Monoid provides two newtype wrappers, Sum and Product, with appropriate Monoid instances.

> getSum (mconcat . map Sum $ [1..5])

15

> getProduct (mconcat . map Product $ [1..5])

120

This example code is silly, of course; we could just write sum [1..5] and product [1..5]. Nevertheless, these instances are useful in more generalized settings, as we will see in the section Foldable.

Any and All are newtype wrappers providing Monoid instances for Bool (under disjunction and conjunction, respectively).

- There are three instances for Maybe: a basic instance which lifts a Monoid instance for a to an instance for Maybe a, and two newtype wrappers First and Last for which mappend selects the first (respectively last) non-Nothing item.

Endo a is a newtype wrapper for functions a -> a, which form a monoid under composition.

- There are several ways to “lift” Monoid instances to instances with additional structure. We have already seen that an instance for a can be lifted to an instance for Maybe a. There are also tuple instances: if a and b are instances of Monoid, then so is (a,b), using the monoid operations for a and b in the obvious pairwise manner. Finally, if a is a Monoid, then so is the function type e -> a for any e; in particular, g `mappend` h is the function which applies both g and h to its argument and then combines the results using the underlying Monoid instance for a. This can be quite useful and elegant (see example).

- The type Ordering = LT | EQ | GT is a Monoid, defined in such a way that mconcat (zipWith compare xs ys) computes the lexicographic ordering of xs and ys (provided xs and ys have the same length). In particular, mempty = EQ, and mappend evaluates to its leftmost non-EQ argument (or EQ if both arguments are EQ). This can be used together with the function instance of Monoid to do some clever things (example).

- There are also Monoid instances for several standard data structures in the containers library (haddock), including Map, Set, and Sequence.
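The instances in the list above can be tried out directly. The following sketch uses only Data.Monoid, Data.List, and Data.Ord from base; the top-level names are hypothetical, and (<>) is used as the infix synonym for mappend.

```haskell
import Data.List (sortBy)
import Data.Monoid
import Data.Ord (comparing)

-- Sum: numbers under addition.
total :: Int
total = getSum (foldMap Sum [1 .. 5])

-- All: Booleans under conjunction.
allEven :: Bool
allEven = getAll (foldMap (All . even) [2, 4, 6 :: Int])

-- First: keeps the first non-Nothing item.
firstJust :: Maybe Int
firstJust = getFirst (First Nothing <> First (Just 1) <> First (Just 3))

-- The basic Maybe instance lifts the underlying monoid (here, String).
lifted :: Maybe String
lifted = Just "ab" <> Nothing <> Just "c"

-- Endo: functions a -> a under composition.
composed :: Int
composed = appEndo (Endo (+ 1) <> Endo (* 2)) 5

-- The function instance combined with the Ordering instance:
-- sort by length first, breaking ties alphabetically.
sorted :: [String]
sorted = sortBy (comparing length <> compare) ["bb", "a", "ab", "c"]
```

In composed, the right-hand function is applied first, so the value is (5 * 2) + 1. In sorted, the second comparison is consulted only when the first returns EQ, which is exactly the behaviour of mappend on Ordering described above.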

Monoid is also used to enable several other type class instances. As noted previously, we can use Monoid to make ((,) e) an instance of Applicative:

instance Monoid e => Applicative ((,) e) where
  pure x = (mempty, x)
  (u, f) <*> (v, x) = (u `mappend` v, f x)

Monoid can be similarly used to make ((,) e) an instance of Monad as well; this is known as the writer monad. As we've already seen, Writer and WriterT are a newtype wrapper and transformer for this monad, respectively.

Monoid also plays a key role in the Foldable type class (see section Foldable).
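The pair instances can be used as a bare-bones writer monad. The sketch below assumes a version of base that provides both the Applicative and the Monad instance for ((,) e); the names logged, sumLogged, and chained are hypothetical.

```haskell
-- A logging step: the first component is the log, the second the result.
logged :: Int -> ([String], Int)
logged x = (["saw " ++ show x], x)

-- Applicative style: the logs are combined with mappend, left to right.
sumLogged :: ([String], Int)
sumLogged = (+) <$> logged 1 <*> logged 2

-- Monadic style: later steps may depend on earlier results.
chained :: ([String], Int)
chained = do
  a <- logged 3
  b <- logged (a * 2)
  return (a + b)
```

In chained, the second log entry mentions 6 because the second step uses the result of the first, something the Applicative version cannot express.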

Other monoidal classes: Alternative, MonadPlus, ArrowPlus

The Alternative type class (haddock) is for Applicative functors which also have a monoid structure:

class Applicative f => Alternative f where
  empty :: f a
  (<|>) :: f a -> f a -> f a

Of course, instances of Alternative should satisfy the monoid laws.

Likewise, MonadPlus (haddock) is for Monads with a monoid structure:

class Monad m => MonadPlus m where
  mzero :: m a
  mplus :: m a -> m a -> m a

The MonadPlus documentation states that it is intended to model monads which also support “choice and failure”; in addition to the monoid laws, instances of MonadPlus are expected to satisfy

mzero >>= f = mzero
v >> mzero = mzero

which explains the sense in which mzero denotes failure. Since mzero should be the identity for mplus, the computation m1 `mplus` m2 succeeds (evaluates to something other than mzero) if either m1 or m2 does; so mplus represents choice. The guard function can also be used with instances of MonadPlus; it requires a condition to be satisfied and fails (using mzero) if it is not. A simple example of a MonadPlus instance is [], which is exactly the same as the Monoid instance for []: the empty list represents failure, and list concatenation represents choice. In general, however, a MonadPlus instance for a type need not be the same as its Monoid instance; Maybe is an example of such a type. A great introduction to the MonadPlus type class, with interesting examples of its use, is Doug Auclair's MonadPlus: What a Super Monad! in the Monad.Reader issue 11.
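A short sketch of these ideas, using guard and the MonadPlus methods from Control.Monad. The names are hypothetical; the last two definitions illustrate the remark that the MonadPlus and Monoid instances for Maybe differ.

```haskell
import Control.Monad (MonadPlus (..), guard)

-- guard prunes branches of a nondeterministic list computation.
evenSquares :: [Int]
evenSquares = do
  x <- [1 .. 6]
  guard (even x)
  return (x * x)

-- A function that is generic in the MonadPlus instance:
-- failure is mzero, whatever the monad.
safeDiv :: MonadPlus m => Int -> Int -> m Int
safeDiv _ 0 = mzero
safeDiv n d = return (n `div` d)

-- For Maybe, mplus keeps the first success, while the Monoid
-- instance combines the contents of both arguments.
choice, combined :: Maybe String
choice   = Just "a" `mplus` Just "b"
combined = Just "a" `mappend` Just "b"
```

Used at type Maybe Int, safeDiv 6 0 is Nothing; used at type [Int], it is the empty list, and mplus then concatenates the alternatives.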

There used to be a type class called MonadZero containing only mzero, representing monads with failure. The do-notation requires some notion of failure to deal with failing pattern matches. Unfortunately, MonadZero was scrapped in favor of adding the fail method to the Monad class. If we are lucky, someday MonadZero will be restored, and fail will be banished to the bit bucket where it belongs (see MonadPlus reform proposal). The idea is that any do-block which uses pattern matching (and hence may fail) would require a MonadZero constraint; otherwise, only a Monad constraint would be required.

Finally, ArrowZero and ArrowPlus (haddock) represent Arrows (see below) with a monoid structure:

class Arrow (~>) => ArrowZero (~>) where
  zeroArrow :: b ~> c

class ArrowZero (~>) => ArrowPlus (~>) where
  (<+>) :: (b ~> c) -> (b ~> c) -> (b ~> c)

Further reading

Monoids have gotten a fair bit of attention recently, ultimately due to a blog post by Brian Hurt, in which he complained about the fact that the names of many Haskell type classes (Monoid in particular) are taken from abstract mathematics. This resulted in a long haskell-cafe thread arguing the point and discussing monoids in general.

∗ May its name live forever.

However, this was quickly followed by several blog posts about Monoid ∗. First, Dan Piponi wrote a great introductory post, Haskell Monoids and their Uses (http://blog.sigfpe.com/2009/01/haskell-monoids-and-their-uses.html). This was quickly followed by Heinrich Apfelmus's Monoids and Finger Trees, an accessible exposition of Hinze and Paterson's classic paper on 2-3 finger trees, which makes very clever use of Monoid to implement an elegant and generic data structure. Dan Piponi then wrote two fascinating articles about using Monoids (and finger trees): Fast Incremental Regular Expressions and Beyond Regular Expressions. In a similar vein, David Place's article on improving Data.Map in order to compute incremental folds (see the Monad Reader issue 11) is also a good example of using Monoid to generalize a data structure.

Some other interesting examples of Monoid use include building elegant list sorting combinators (http://www.reddit.com/r/programming/comments/7cf4r/monoids_in_my_programming_language/c06adnx), collecting unstructured information, and a brilliant series of posts by Chung-Chieh Shan and Dylan Thurston using Monoids to elegantly solve a difficult combinatorial puzzle (http://conway.rutgers.edu/~ccshan/wiki/blog/posts/WordNumbers1/; followed by part 2, part 3, part 4).

As unlikely as it sounds, monads can actually be viewed as a sort of monoid, with join playing the role of the binary operation and return the role of the identity; see Dan Piponi's blog post.

Foldable

The Foldable class, defined in the Data.Foldable module (haddock), abstracts over containers which can be “folded” into a summary value. This allows such folding operations to be written in a container-agnostic way.

Definition

The definition of the Foldable type class is:

class Foldable t where
  fold    :: Monoid m => t m -> m
  foldMap :: Monoid m => (a -> m) -> t a -> m

  foldr   :: (a -> b -> b) -> b -> t a -> b
  foldl   :: (a -> b -> a) -> a -> t b -> a
  foldr1  :: (a -> a -> a) -> t a -> a
  foldl1  :: (a -> a -> a) -> t a -> a

This may look complicated, but in fact, to make a Foldable instance you only need to implement one method: your choice of foldMap or foldr. All the other methods have default implementations in terms of these, and are presumably included in the class in case more efficient implementations can be provided.

Instances and examples

The type of foldMap should make it clear what it is supposed to do: given a way to convert the data in a container into a Monoid (a function a -> m) and a container of a's (t a), foldMap provides a way to iterate over the entire contents of the container, converting all the a's to m's and combining all the m's with mappend. The following code shows two examples: a simple implementation of foldMap for lists, and a binary tree example provided by the Foldable documentation.

instance Foldable [] where
  foldMap g = mconcat . map g

data Tree a = Empty | Leaf a | Node (Tree a) a (Tree a)

instance Foldable Tree where
  foldMap f Empty = mempty
  foldMap f (Leaf x) = f x
  foldMap f (Node l k r) = foldMap f l ++ f k ++ foldMap f r
    where (++) = mappend

The foldr function has a type similar to the foldr found in the Prelude, but more general, since the foldr in the Prelude works only on lists.

The Foldable module also provides instances for Maybe and Array; additionally, many of the data structures found in the standard containers library (for example, Map, Set, Tree, and Sequence) provide their own Foldable instances.

Derived folds

Given an instance of Foldable, we can write generic, container-agnostic functions such as:

-- Compute the size of any container.

containerSize :: Foldable f => f a -> Int

containerSize = getSum . foldMap (const (Sum 1))

-- Compute a list of elements of a container satisfying a predicate.

filterF :: Foldable f => (a -> Bool) -> f a -> [a]

filterF p = foldMap (\a -> if p a then [a] else [])

-- Get a list of all the Strings in a container which include the

-- letter a.

aStrings :: Foldable f => f String -> [String]

aStrings = filterF (elem 'a')

The Foldable module also provides a large number of predefined folds, many of which are generalized versions of Prelude functions of the same name that only work on lists: concat, concatMap, and, or, any, all, sum, product, maximum(By), minimum(By), elem, notElem, and find. The reader may enjoy coming up with elegant implementations of these functions using fold or foldMap and appropriate Monoid instances.
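As a starting point for that exercise, here is one possible way to write a few of these folds with foldMap and the newtype wrappers from Data.Monoid. This is a sketch, not the library's definitions, and the primed names are hypothetical; it assumes a version of base in which Foldable and foldMap are available from the Prelude.

```haskell
import Data.Monoid

-- Add up the elements using the Sum monoid.
sum' :: (Foldable t, Num a) => t a -> a
sum' = getSum . foldMap Sum

-- Does any element satisfy the predicate? Uses the Any monoid.
any' :: Foldable t => (a -> Bool) -> t a -> Bool
any' p = getAny . foldMap (Any . p)

elem' :: (Foldable t, Eq a) => a -> t a -> Bool
elem' x = any' (== x)

-- With the list monoid, foldMap is already concatMap.
concatMap' :: Foldable t => (a -> [b]) -> t a -> [b]
concatMap' = foldMap

-- The First monoid keeps the first element that passes the test.
find' :: Foldable t => (a -> Bool) -> t a -> Maybe a
find' p = getFirst . foldMap (\x -> First (if p x then Just x else Nothing))
```

Because these are written against Foldable rather than lists, the same definitions work on Maybe, Map, Set, and any other instance.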

There are also generic functions that work with Applicative or Monad instances to generate some sort of computation from each element in a container, and then perform all the side effects from those computations, discarding the results: traverse_, sequenceA_, and others. The results must be discarded because the Foldable class is too weak to specify what to do with them: we cannot, in general, make an arbitrary Applicative or Monad instance into a Monoid. If we do have an Applicative or Monad with a monoid structure (that is, an Alternative or a MonadPlus), then we can use the asum or msum functions, which can combine the results as well. Consult the Foldable documentation for more details on any of these functions.
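A small sketch of traverse_ and asum from Data.Foldable. To keep the example pure, the “side effect” is a log accumulated through the Applicative instance for pairs; the names visitLog and firstSuccess are hypothetical.

```haskell
import Data.Foldable (asum, traverse_)

-- Each element contributes a log entry; the unit results of the
-- individual computations are discarded, only the effects remain.
visitLog :: ([String], ())
visitLog = traverse_ (\x -> (["visit " ++ show x], ())) [1, 2, 3 :: Int]

-- With an Alternative such as Maybe, asum combines the results too:
-- here it keeps the first success.
firstSuccess :: Maybe Int
firstSuccess = asum [Nothing, Just 1, Just 2]
```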

Note that the Foldable operations always forget the structure of the container being folded. If we start with a container of type t a for some Foldable t, then t will never appear in the output type of any operations defined in the Foldable module. Many times this is exactly what we want, but sometimes we would like to be able to generically traverse a container while preserving its structure, and this is exactly what the Traversable class provides, which will be discussed in the next section.

Further reading

The Foldable class had its genesis in McBride and Paterson's paper introducing Applicative, although it has been fleshed out quite a bit from the form in the paper.

An interesting use of Foldable (as well as Traversable) can be found in Janis Voigtländer's paper Bidirectionalization for free!.

Traversable

Definition

The Traversable type class, defined in the Data.Traversable module (haddock), is:

class (Functor t, Foldable t) => Traversable t where
  traverse  :: Applicative f => (a -> f b) -> t a -> f (t b)
  sequenceA :: Applicative f => t (f a) -> f (t a)
  mapM      :: Monad m => (a -> m b) -> t a -> m (t b)
  sequence  :: Monad m => t (m a) -> m (t a)

As you can see, every Traversable is also a foldable functor. Like Foldable, there is a lot in this type class, but making instances is actually rather easy: one need only implement traverse or sequenceA; the other methods all have default implementations in terms of these functions. A good exercise is to figure out what the default implementations should be: given either traverse or sequenceA, how would you define the other three methods? (Hint for mapM: Control.Applicative exports the WrapMonad newtype, which makes any Monad into an Applicative. The sequence function can be implemented in terms of mapM.)

Intuition

The key method of the Traversable class, and the source of its

unique power, is sequenceA. Consider its type:

sequenceA :: Applicative f => t (f a) -> f (t a)

This answers the fundamental question: when can we commute two functors? For example, can we turn a tree of lists into a list of trees? (Answer: yes, in two ways. Figuring out what they are, and why, is left as an exercise. A much more challenging question is whether a list of trees can be turned into a tree of lists.)
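To make the idea of commuting functors concrete, here is a minimal sketch that uses the list and Maybe functors (deliberately not the tree exercise above). The names allPresent, oneMissing, distributed, and parseAll are invented for this example; readMaybe comes from Text.Read in base.

```haskell
import Data.Traversable (sequenceA, traverse)
import Text.Read (readMaybe)

-- A list of Maybe values becomes a Maybe list: all elements must be present.
allPresent :: Maybe [Int]
allPresent = sequenceA [Just 1, Just 2, Just 3]

-- A single Nothing makes the whole result Nothing.
oneMissing :: Maybe [Int]
oneMissing = sequenceA [Just 1, Nothing, Just 3]

-- The other direction: a Maybe list becomes a list of Maybe values.
distributed :: [Maybe Int]
distributed = sequenceA (Just [1, 2, 3])

-- traverse maps an effectful function and commutes in one step.
parseAll :: [String] -> Maybe [Int]
parseAll = traverse readMaybe
```

With these definitions, allPresent is Just [1,2,3], oneMissing is Nothing, and distributed is [Just 1,Just 2,Just 3].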

The ability to compose two monads depends crucially on this ability to

commute functors. Intuitively, if we want to build a composed monad

M a = m (n a) out of monads m and n, then to be able to

implement join :: M (M a) -> M a, that is,

join :: m (n (m (n a))) -> m (n a), we have to be able to commute

the n past the m to get m (m (n (n a))), and then we can use the

joins for m and n to produce something of type m (n a). See

Mark Jones's paper for more details.
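The recipe above can be sketched directly, under the assumption that the inner monad n is also Traversable so that sequenceA can do the commuting. The name joinComposed is invented here. The function typechecks for any such pair, but that alone does not guarantee that the result satisfies the monad laws.

```haskell
import Control.Monad (join)
import Data.Traversable (sequenceA)

-- Step 1: fmap sequenceA commutes the inner n past the inner m,
--         turning m (n (m (n a))) into m (m (n (n a))).
-- Step 2: join (for m) collapses the two outer layers.
-- Step 3: fmap join (for n) collapses the two inner layers.
joinComposed :: (Monad m, Monad n, Traversable n)
             => m (n (m (n a))) -> m (n a)
joinComposed = fmap join . join . fmap sequenceA
```

For example, with m as the list monad and n as Maybe, joinComposed [Just [Just 1, Just 2], Nothing] evaluates to [Just 1, Just 2, Nothing].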

Instances and examples

What's an example of a Traversable instance?

The following code shows an example instance for the same

Tree type used as an example in the previous Foldable section. It

is instructive to compare this instance with a Functor instance for

Tree, which is also shown.

data Tree a = Empty | Leaf a | Node (Tree a) a (Tree a)

instance Traversable Tree where
  traverse g Empty        = pure Empty
  traverse g (Leaf x)     = Leaf <$> g x
  traverse g (Node l x r) = Node <$> traverse g l
                                 <*> g x
                                 <*> traverse g r

instance Functor Tree where
  fmap g Empty        = Empty
  fmap g (Leaf x)     = Leaf $ g x
  fmap g (Node l x r) = Node (fmap g l)
                             (g x)
                             (fmap g r)

It should be clear that the Traversable and Functor instances for

Tree are almost identical; the only difference is that the Functor

instance involves normal function application, whereas the

applications in the Traversable instance take place within an

Applicative context, using (<$>) and (<*>). In fact, this will be true for any type.

Any Traversable functor is also Foldable, and a Functor. We can see

this not only from the class declaration, but by the fact that we can

implement the methods of both classes given only the Traversable

methods. A good exercise is to implement fmap and foldMap using

only the Traversable methods; the implementations are surprisingly

elegant. The Traversable module provides these

implementations as fmapDefault and foldMapDefault.
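Without giving away the exercise, here is a sketch of how fmapDefault and foldMapDefault can be used: only traverse is written by hand, and the Functor and Foldable instances are obtained from it. The Tree type is repeated so the block stands alone; the helper elements is invented for this example.

```haskell
import Data.Foldable (toList)
import Data.Traversable (fmapDefault, foldMapDefault)

data Tree a = Empty | Leaf a | Node (Tree a) a (Tree a)
  deriving (Show, Eq)

-- Both superclass instances are derived from traverse.
instance Functor Tree where
  fmap = fmapDefault

instance Foldable Tree where
  foldMap = foldMapDefault

-- The only hand-written traversal.
instance Traversable Tree where
  traverse _ Empty        = pure Empty
  traverse g (Leaf x)     = Leaf <$> g x
  traverse g (Node l x r) = Node <$> traverse g l <*> g x <*> traverse g r

-- In-order list of the elements, via the derived Foldable instance.
elements :: Tree a -> [a]
elements = toList
```

This works because traverse itself never calls fmap or foldMap on Tree, so the definitions are not circular.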

The standard libraries provide a number of Traversable instances,

including instances for [], Maybe, Map, Tree, and Seq (from Data.Sequence).

Notably, Set is not Traversable, although it is Foldable.

Further reading

The Traversable class also had its genesis in McBride and Paterson's Applicative paper (http://www.soi.city.ac.uk/~ross/papers/Applicative.html), and is described in more detail in Gibbons and Oliveira, The Essence of the Iterator Pattern, which also contains a wealth of references to related work.

Category

Category is another fairly new addition to the Haskell standard

libraries; you may or may not have it installed depending on the

version of your base package. It generalizes the notion of

function composition to general “morphisms.”

The definition of the Category type class (from

Control.Category—haddock) is shown below. For ease of reading, note that I have used an

infix type constructor (~>), much like the infix function type

constructor (->). This syntax is not part of Haskell 98.

The second definition shown is the one used in the standard libraries.

For the remainder of the article, I will use the infix type

constructor (~>) for Category as well as Arrow.

class Category (~>) where
  id  :: a ~> a
  (.) :: (b ~> c) -> (a ~> b) -> (a ~> c)

-- The same thing, with a normal (prefix) type constructor
class Category cat where
  id  :: cat a a
  (.) :: cat b c -> cat a b -> cat a c

Note that an instance of Category should be a type constructor which

takes two type arguments, that is, something of kind * -> * -> *. It

is instructive to imagine the type constructor variable cat replaced

by the function constructor (->): indeed, in this case we recover

precisely the familiar identity function id and function composition

operator (.) defined in the standard Prelude.

Of course, the Category module provides exactly such an instance of

Category for (->). But it also provides one other instance, shown

below, which should be familiar from the

previous discussion of the Monad laws. Kleisli m a b, as defined

in the Control.Arrow module, is just a newtype wrapper around a -> m b.

newtype Kleisli m a b = Kleisli { runKleisli :: a -> m b }

instance Monad m => Category (Kleisli m) where
  id = Kleisli return
  Kleisli g . Kleisli h = Kleisli (h >=> g)

The only law that Category instances should satisfy is that id and

(.) should form a monoid—that is, id should be the identity of

(.), and (.) should be associative.

Finally, the Category module exports two additional operators:

(<<<), which is just a synonym for (.), and (>>>), which is

(.) with its arguments reversed. (In previous versions of the

libraries, these operators were defined as part of the Arrow class.)
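The following sketch shows (>>>) and (<<<) at work in both Category instances discussed above. The functions safeRecip and safeSqrt, and the names of the composed values, are invented for this example.

```haskell
import Control.Arrow (Kleisli (..))
import Control.Category ((<<<), (>>>))

-- Hypothetical partial operations, modelled with Maybe.
safeRecip :: Double -> Maybe Double
safeRecip 0 = Nothing
safeRecip x = Just (1 / x)

safeSqrt :: Double -> Maybe Double
safeSqrt x
  | x < 0     = Nothing
  | otherwise = Just (sqrt x)

-- Kleisli composition, left to right: reciprocal first, then square root.
recipThenSqrt :: Kleisli Maybe Double Double
recipThenSqrt = Kleisli safeRecip >>> Kleisli safeSqrt

-- The same operators on ordinary functions.
incThenDouble :: Int -> Int
incThenDouble = (+ 1) >>> (* 2)

doubleThenInc :: Int -> Int
doubleThenInc = (+ 1) <<< (* 2)
```

For instance, runKleisli recipThenSqrt 4 is Just 0.5, while incThenDouble 3 is 8 and doubleThenInc 3 is 7.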

Further reading

The name Category is a bit misleading, since the Category class

cannot represent arbitrary categories, but only categories whose

objects are objects of Hask, the category of Haskell types. For a

more general treatment of categories within Haskell, see the

category-extras package. For more about

category theory in general, see the excellent Haskell wikibook page,

Steve Awodey's new book,

Benjamin Pierce's

Basic category theory for computer scientists, or

Barr and Wells's category theory lecture notes. Benjamin Russell's blog post

is another good source of motivation and

category theory links. You certainly don't need to know any category

theory to be a successful and productive Haskell programmer, but it

does lend itself to much deeper appreciation of Haskell's underlying

theory.

Arrow

The Arrow class represents another abstraction of computation, in a

similar vein to Monad and Applicative. However, unlike Monad

and Applicative, whose types only reflect their output, the type of

an Arrow computation reflects both its input and output. Arrows

generalize functions: if (~>) is an instance of Arrow, a value of

type b ~> c can be thought of as a computation which takes values of

type b as input, and produces values of type c as output. In the

(->) instance of Arrow this is just a pure function; in general, however,

an arrow may represent some sort of “effectful” computation.

Definition

The definition of the Arrow type class, from

Control.Arrow (haddock), is:

class Category (~>) => Arrow (~>) where
  arr    :: (b -> c) -> (b ~> c)
  first  :: (b ~> c) -> ((b, d) ~> (c, d))
  second :: (b ~> c) -> ((d, b) ~> (d, c))
  (***)  :: (b ~> c) -> (b' ~> c') -> ((b, b') ~> (c, c'))
  (&&&)  :: (b ~> c) -> (b ~> c') -> (b ~> (c, c'))

∗ In versions of the base

package prior to version 4, there is no Category class, and the

Arrow class includes the arrow composition operator (>>>). It

also includes pure as a synonym for arr, but this was removed

since it conflicts with the pure from Applicative.

The first thing to note is the Category class constraint, which

means that we get identity arrows and arrow composition for free:

given two arrows g :: b ~> c and h :: c ~> d, we can form their

composition g >>> h :: b ~> d ∗.

As should be a familiar pattern by now, the only methods which must be

defined when writing a new instance of Arrow are arr and first;

the other methods have default definitions in terms of these, but are

included in the Arrow class so that they can be overridden with more

efficient implementations if desired.
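As a sketch of what a minimal instance looks like, the following defines only arr and first for a hypothetical stream-function type SF (a function on lists), in the style of the examples in Hughes's and Paterson's arrow papers. The names SF, runSF, and delay are assumptions made for this illustration, and the arrow laws are not verified here.

```haskell
import Prelude hiding (id, (.))
import Control.Arrow (Arrow (..))
import Control.Category (Category (..))

-- Hypothetical example type: a function on whole streams (lists) of values.
newtype SF a b = SF { runSF :: [a] -> [b] }

instance Category SF where
  id          = SF id        -- the id on the right is the one for (->)
  SF g . SF f = SF (g . f)   -- likewise, (.) on the right is for (->)

instance Arrow SF where
  -- A pure function is applied to every element of the stream.
  arr f        = SF (map f)
  -- Split the stream of pairs, run f on the first components, and re-pair.
  first (SF f) = SF (\xs -> let (bs, ds) = unzip xs in zip (f bs) ds)

-- Emit an initial value, then the input stream.
delay :: a -> SF a a
delay x = SF (x :)
```

With just these two methods, second, (***), and (&&&) come for free from the class defaults; for example, runSF (arr (+ 1) &&& delay 0) [1, 2, 3] yields [(2,0),(3,1),(4,2)].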

Intuition

Let's look at each of the arrow methods in turn. Ross Paterson's web page on arrows has nice diagrams which can help build intuition.

- The arr function takes any function b -> c and turns it into a

generalized arrow b ~> c. The arr method justifies the claim

that arrows generalize functions, since it says that we can treat

any function as an arrow. It is intended that the arrow arr g is

“pure” in the sense that it only computes g and has no

“effects” (whatever that might mean for any particular arrow type).

- The first method turns any arrow from b to c into an arrow

from (b,d) to (c,d). The idea is that first g uses g to

process the first element of a tuple, and lets the second element pass

through unchanged. For the function instance of Arrow, of course,

first g (x,y) = (g x, y).

- The second function is similar to first, but with the elements of the

tuples swapped. Indeed, it can be defined in terms of first using

an auxiliary function swap, defined by swap (x,y) = (y,x).

- The (***) operator is “parallel composition” of arrows: it takes two

arrows and makes them into one arrow on tuples, which has the

behavior of the first arrow on the first element of a tuple, and the

behavior of the second arrow on the second element. The mnemonic

is that g *** h is the product (hence *) of g and

h. For the function instance of Arrow,

we define (g *** h) (x,y) = (g x, h y). The default implementation of

(***) is in terms of first, second, and sequential arrow

composition (>>>). The reader may also wish to think about how to

implement first and second in terms of (***).

- The (&&&) operator is “fanout composition” of arrows: it takes two arrows

g and h and makes them into a new arrow g &&& h which supplies

its input as the input to both g and h, returning their results

as a tuple. The mnemonic is that g &&& h performs both g

and h (hence &) on its input. For functions, we define (g &&& h) x = (g x, h x).
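The behavior of the methods described in the list above can be tried out with the function instance of Arrow. In this sketch all value names are invented, and secondViaFirst is one possible way to write second using first and swap, as suggested above; it is not claimed to be the library's own definition.

```haskell
import Control.Arrow (Arrow, arr, first, second, (&&&), (***), (>>>))
import Data.Tuple (swap)

-- first applies the function to the first component only.
onFirst :: (Int, String)
onFirst = first (+ 1) (1, "x")

-- second applies the function to the second component only.
onSecond :: (Int, Int)
onSecond = second length (1, "abc")

-- (***) runs one function on each component.
parallel :: (Int, Int)
parallel = ((+ 1) *** (* 2)) (3, 4)

-- (&&&) feeds the same input to both functions.
fanout :: (Int, Int)
fanout = ((+ 1) &&& (* 2)) 3

-- second expressed through first: swap, process, swap back.
secondViaFirst :: Arrow k => k b c -> k (d, b) (d, c)
secondViaFirst g = arr swap >>> first g >>> arr swap
```

These evaluate to (2,"x"), (1,3), (4,8), and (4,6) respectively, and secondViaFirst length (1, "abc") agrees with onSecond.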

Instances

The Arrow library itself only provides two Arrow instances, both

of which we have already seen: (->), the normal function

constructor, and Kleisli m, which makes functions of

type a -> m b into Arrows for any Monad m. These instances are:

instance Arrow (->) where
  arr g = g
  first g (x,y) = (g x, y)

newtype Kleisli m a b = Kleisli { runKleisli :: a -> m b }

instance Monad m => Arrow (Kleisli m) where
  arr f = Kleisli (return . f)
  first (Kleisli f) = Kleisli (\ ~(b,d) -> do
    c <- f b
    return (c,d))
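To see the Kleisli instance in action, here is a minimal sketch using the Maybe monad. The arrow divide100 and the two derived functions are invented for this example.

```haskell
import Control.Arrow (Kleisli (..), arr, first, (>>>))

-- Hypothetical effectful step: division that fails on a zero divisor.
divide100 :: Kleisli Maybe Int Int
divide100 = Kleisli (\x -> if x == 0 then Nothing else Just (100 `div` x))

-- first runs the arrow on the first component and passes the second through;
-- a failure in the arrow makes the whole computation fail.
onPair :: (Int, String) -> Maybe (Int, String)
onPair = runKleisli (first divide100)

-- arr lifts a pure function so it can be composed with an effectful arrow.
decrementThenDivide :: Int -> Maybe Int
decrementThenDivide = runKleisli (arr (subtract 1) >>> divide100)
```

Here onPair (5, "a") is Just (20,"a"), onPair (0, "a") is Nothing, and decrementThenDivide 1 is Nothing because the decremented input is zero.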

Laws

∗ See John Hughes: Generalising monads to arrows; Sam Lindley, Philip Wadler, Jeremy Yallop: The arrow calculus; Ross Paterson: Programming with Arrows.

There are quite a few laws that instances of Arrow should

satisfy ∗:

arr id = id

arr (h . g) = arr g >>> arr h

first (arr g) = arr (g *** id)

first (g >>> h) = first g >>> first h

first g >>> arr (id *** h) = arr (id *** h) >>> first g

first g >>> arr fst = arr fst >>> g

first (first g) >>> arr assoc = arr assoc >>> first g

assoc ((x,y),z) = (x,(y,z))

Note that this version of the laws is slightly different from the laws given in the

first two above references, since several of the laws have now been

subsumed by the Category laws (in particular, the requirements that

id is the identity arrow and that (>>>) is associative). The laws

shown here follow those in Paterson's Programming with Arrows, which uses the

Category class.
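For intuition, some of the laws can be spot-checked for the function instance on sample inputs. This is only a sketch: the functions g and h are arbitrary choices made for this example, and checking a few inputs is of course not a proof.

```haskell
import Control.Arrow (arr, first, (***), (>>>))

assoc :: ((a, b), c) -> (a, (b, c))
assoc ((x, y), z) = (x, (y, z))

-- Arbitrary sample functions, chosen only for this check.
g, h :: Int -> Int
g = (+ 1)
h = (* 2)

-- first (arr g) = arr (g *** id)
lawFirstArr :: (Int, Int) -> Bool
lawFirstArr p = first (arr g) p == arr (g *** id) p

-- first g >>> arr (id *** h) = arr (id *** h) >>> first g
lawExchange :: (Int, Int) -> Bool
lawExchange p = (first g >>> arr (id *** h)) p == (arr (id *** h) >>> first g) p

-- first g >>> arr fst = arr fst >>> g
lawUnit :: (Int, Int) -> Bool
lawUnit p = (first g >>> arr fst) p == (arr fst >>> g) p

-- first (first g) >>> arr assoc = arr assoc >>> first g
lawAssoc :: ((Int, Int), Int) -> Bool
lawAssoc p = (first (first g) >>> arr assoc) p == (arr assoc >>> first g) p
```

Each predicate compares the two sides of one law at a single input; mapping them over a range of inputs gives a quick sanity check.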

∗ Unless category-theory-induced insomnolence is your cup of tea.

The reader is advised not to lose too much sleep over the Arrow

laws ∗, since it is not essential to understand them in order to

program with arrows. There are also laws that ArrowChoice,

ArrowApply, and ArrowLoop instances should satisfy; the interested

reader should consult Paterson: Programming with Arrows.

ArrowChoice

Computations built using the Arrow class, like those built using

the Applicative class, are rather inflexible: the structure of the computation

is fixed at the outset, and there is no ability to choose between

alternate execution paths based on intermediate results.

The ArrowChoice class provides exactly such an ability:

class Arrow (~>) => ArrowChoice (~>) where

left :: (b ~> c) -> (Either b d ~> Either c d)