User:Michiexile/MATH198/Lecture 6

Michiexile (talk | contribs) |

Michiexile (talk | contribs) |

||

| Line 12: | Line 12: | ||

* <math>C</math> has all finite limits. |

* <math>C</math> has all finite limits. |

||

* <math>C</math> has all finite products and all equalizers. |

* <math>C</math> has all finite products and all equalizers. |

||

| − | * <math>C</math> has all pullbacks and a terminal object. |

+ | * <math>C</math> has all pullbacks and a terminal object. |

| + | |||

| + | Also, the following dual statements are equivalent: |

||

* <math>C</math> has all finite colimits. |

* <math>C</math> has all finite colimits. |

||

* <math>C</math> has all finite coproducts and all coequalizers. |

* <math>C</math> has all finite coproducts and all coequalizers. |

||

| Line 24: | Line 26: | ||

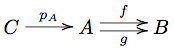

A limit over this diagram is an object <math>C</math> and arrows to all diagram objects. The commutativity conditions for the arrows defined force for us <math>fp_A = p_B = gp_A</math>, and thus, keeping this enforced equation in mind, we can summarize the cone diagram as: |

A limit over this diagram is an object <math>C</math> and arrows to all diagram objects. The commutativity conditions for the arrows defined force for us <math>fp_A = p_B = gp_A</math>, and thus, keeping this enforced equation in mind, we can summarize the cone diagram as: |

||

| + | [[Image:EqualizerCone.png]] |

||

| ⚫ | |||

| + | |||

| ⚫ | |||

As usual, it is helpful to consider the situation in Set to make sense of any categorical definition: and the situation there is helped by the generalized element viewpoint: the limit object <math>C</math> is one representative of a subobject of <math>A</math> that for the case of Set contains all <math>x\in A: f(x) = g(x)</math>. |

As usual, it is helpful to consider the situation in Set to make sense of any categorical definition: and the situation there is helped by the generalized element viewpoint: the limit object <math>C</math> is one representative of a subobject of <math>A</math> that for the case of Set contains all <math>x\in A: f(x) = g(x)</math>. |

||

| Line 30: | Line 34: | ||

Hence the word we use for this construction: the limit of the diagram above is the ''equalizer of <math>f, g</math>''. It captures the idea of a maximal subset unable to distinguish two given functions, and it introduces a categorical way to define things by equations we require them to respect. |

Hence the word we use for this construction: the limit of the diagram above is the ''equalizer of <math>f, g</math>''. It captures the idea of a maximal subset unable to distinguish two given functions, and it introduces a categorical way to define things by equations we require them to respect. |

||

| − | One important special case of the equalizer is the ''kernel'': in a category with a null object, we have a distinguished, unique, member <math>0</math> of any homset given by the compositions of the unique arrows to and from the null object. We define ''the kernel'' <math>Ker(f)</math> of an arrow <math>f</math> to be the equalizer of <math>f, 0</math>. Keeping in mind the arrow-centric view on categories, we tend to |

+ | One important special case of the equalizer is the ''kernel'': in a category with a null object, we have a distinguished, unique, member <math>0</math> of any homset given by the compositions of the unique arrows to and from the null object. We define ''the kernel'' <math>Ker(f)</math> of an arrow <math>f</math> to be the equalizer of <math>f, 0</math>. Keeping in mind the arrow-centric view on categories, we tend to denote the arrow from <math>Ker(f)</math> to the source of <math>f</math> by <math>ker(f)</math>. |

In the category of vector spaces, and linear maps, the map <math>0</math> really is the constant map taking the value <math>0</math> everywhere. And the kernel of a linear map <math>f:U\to V</math> is the equalizer of <math>f,0</math>. Thus it is some vector space <math>W</math> with a map <math>i:W\to U</math> such that <math>fi = 0i = 0</math>, and any other map that fulfills this condition factors through <math>W</math>. Certainly the vector space <math>\{u\in U: f(u)=0\}</math> fulfills the requisite condition, nothing larger will do, since then the map composition wouldn't be 0, and nothing smaller will do, since then the maps factoring this space through the smaller candidate would not be unique. |

In the category of vector spaces, and linear maps, the map <math>0</math> really is the constant map taking the value <math>0</math> everywhere. And the kernel of a linear map <math>f:U\to V</math> is the equalizer of <math>f,0</math>. Thus it is some vector space <math>W</math> with a map <math>i:W\to U</math> such that <math>fi = 0i = 0</math>, and any other map that fulfills this condition factors through <math>W</math>. Certainly the vector space <math>\{u\in U: f(u)=0\}</math> fulfills the requisite condition, nothing larger will do, since then the map composition wouldn't be 0, and nothing smaller will do, since then the maps factoring this space through the smaller candidate would not be unique. |

||

| Line 39: | Line 43: | ||

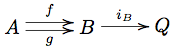

A coequalizer |

A coequalizer |

||

| + | |||

[[Image:CoequalizerCoCone.png]] |

[[Image:CoequalizerCoCone.png]] |

||

| + | |||

has to fulfill that <math>i_Bf = i_A = i_Bg</math>. Thus, writing <math>q=i_B</math>, we get an object with an arrow (actually, an epimorphism out of <math>B</math>) that identifies <math>f</math> and <math>g</math>. Hence, we can think of <math>i_B:B\to Q</math> as catching the notion of inducing equivalence classes from the functions. |

has to fulfill that <math>i_Bf = i_A = i_Bg</math>. Thus, writing <math>q=i_B</math>, we get an object with an arrow (actually, an epimorphism out of <math>B</math>) that identifies <math>f</math> and <math>g</math>. Hence, we can think of <math>i_B:B\to Q</math> as catching the notion of inducing equivalence classes from the functions. |

||

| Line 57: | Line 63: | ||

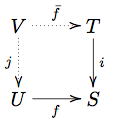

[[Image:PreimageDiagram.png]] |

[[Image:PreimageDiagram.png]] |

||

| − | where <math>i</math> is a monomorphism representing the subobject, we need to find an object <math>V</math> with a monomorphism injecting it into <math>U</math> such that the map <math> |

+ | where <math>i</math> is a monomorphism representing the subobject, we need to find an object <math>V</math> with a monomorphism injecting it into <math>U</math> such that the map <math>\bar fj: V\to S</math> factors through <math>T</math>. Thus we're looking for dotted maps making the diagram commute, in a universal manner. |

The maximality of the subobject means that any other subobject of <math>U</math> that can be factored through <math>T</math> should factor through <math>V</math>. |

The maximality of the subobject means that any other subobject of <math>U</math> that can be factored through <math>T</math> should factor through <math>V</math>. |

||

| Line 77: | Line 83: | ||

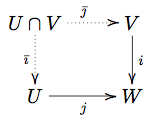

[[Image:PullbackDiagram.png]] |

[[Image:PullbackDiagram.png]] |

||

| − | By the definition of a limit, this means that the pullback is an object <math> |

+ | By the definition of a limit, this means that the pullback is an object <math>P</math> with maps <math>\bar f: P\to B</math>, <math>\bar g: P\to A</math> and <math>f\bar g = g\bar f : P\to C</math>, such that any other such object factors through this. |

For the diagram <math>U\rightarrow^f S \leftarrow^i T</math>, with <math>i:T\to S</math> one representative monomorphism for the subobject, we get precisely the definition above for the inverse image. |

For the diagram <math>U\rightarrow^f S \leftarrow^i T</math>, with <math>i:T\to S</math> one representative monomorphism for the subobject, we get precisely the definition above for the inverse image. |

||

| Line 148: | Line 154: | ||

moreover, the two conditions are related by the formulas |

moreover, the two conditions are related by the formulas |

||

* <math>\phi(g) = U(g) \circ \eta_c</math> |

* <math>\phi(g) = U(g) \circ \eta_c</math> |

||

| − | * <math>\eta_c = \phi(1_{Fc}</math> |

+ | * <math>\eta_c = \phi(1_{Fc})</math> |

'''Proof sketch''' |

'''Proof sketch''' |

||

| Line 177: | Line 183: | ||

moreover, the two conditions are related by the formulas |

moreover, the two conditions are related by the formulas |

||

* <math>\psi(f) = \epsilon_D\circ F(f)</math> |

* <math>\psi(f) = \epsilon_D\circ F(f)</math> |

||

| − | * <math>\epsilon_d = \psi(1_{Ud}</math> |

+ | * <math>\epsilon_d = \psi(1_{Ud})</math> |

where <math>\psi = \phi^{-1}</math>. |

where <math>\psi = \phi^{-1}</math>. |

||

| Line 216: | Line 222: | ||

===Homework=== |

===Homework=== |

||

| − | Complete homework is 6 out of |

+ | Complete homework is 6 out of 11 exercises. |

# Prove that an equalizer is a monomorphism. |

# Prove that an equalizer is a monomorphism. |

||

| Line 229: | Line 235: | ||

## Does it have a left adjoint? What is it? |

## Does it have a left adjoint? What is it? |

||

## Does it have a right adjoint? What is it? |

## Does it have a right adjoint? What is it? |

||

| + | # * Prove the propositions in the text. |

||

| ⚫ | |||

| + | # (worth 4pt) Suppose |

||

| − | |||

| + | :[[Image:AdjointPair.png]] |

||

| ⚫ | |||

:[[Image:AdjointMuAssociative.png]] |

:[[Image:AdjointMuAssociative.png]] |

||

| − | |||

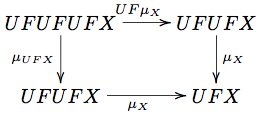

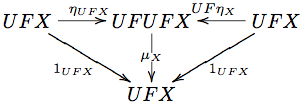

:commutes. Prove that the unit of the adjunction forms a unit for this <math>\mu</math>, in other words, that the diagram |

:commutes. Prove that the unit of the adjunction forms a unit for this <math>\mu</math>, in other words, that the diagram |

||

| − | |||

:[[Image:AdjointMuUnit.png]] |

:[[Image:AdjointMuUnit.png]] |

||

| − | |||

:commutes. |

:commutes. |

||

Revision as of 20:44, 28 October 2009

IMPORTANT NOTE: THESE NOTES ARE STILL UNDER DEVELOPMENT. PLEASE WAIT UNTIL AFTER THE LECTURE BEFORE HANDING ANYTHING IN OR TREATING THE NOTES AS READY TO READ.

Useful limits and colimits

With the tools of limits and colimits at hand, we can start using these to introduce more category theoretical constructions - and some of these turn out to correspond to things we've seen in other areas.

Possibly among the most important are the equalizers and coequalizers (with kernels, i.e. nullspaces, and images as special cases), and the pullbacks and pushouts (with which we can make explicit the idea of inverse images of functions).

One useful theorem to know about is:

Theorem The following are equivalent for a category $C$:

- $C$ has all finite limits.

- $C$ has all finite products and all equalizers.

- $C$ has all pullbacks and a terminal object.

Also, the following dual statements are equivalent:

- $C$ has all finite colimits.

- $C$ has all finite coproducts and all coequalizers.

- $C$ has all pushouts and an initial object.

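For the first two conditions in each list, we can replace finite with any other cardinality in every place it occurs, and we will still get a valid theorem. The third condition is specific to the finite case: pullbacks together with a terminal (respectively initial) object only guarantee finite limits (respectively finite colimits).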
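The constructions behind parts of this theorem can be sketched as follows. They are standard identifications stated without proof, not a full argument. The notation is chosen here for illustration: $1$ is the terminal object, $\pi_A, \pi_B$ are product projections, the middle line uses $f: A\to C$, $g: B\to C$, and the last line uses parallel $f, g: A\to B$.

$$
\begin{aligned}
A\times B &\cong \text{pullback of } A\to 1\leftarrow B,\\
\text{pullback of } A\xrightarrow{f} C\xleftarrow{g} B &\cong \mathrm{Eq}\big(f\pi_A,\ g\pi_B : A\times B\to C\big),\\
\mathrm{Eq}(f,g : A\to B) &\cong \text{pullback of } A\xrightarrow{\langle f,g\rangle} B\times B\xleftarrow{\langle 1_B,1_B\rangle} B.
\end{aligned}
$$

Read from top to bottom: a terminal object and pullbacks give binary products; products and equalizers give pullbacks; and, once products are available, a pullback along the diagonal $\langle 1_B, 1_B\rangle$ gives an equalizer.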

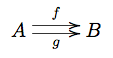

Equalizer, coequalizer

Consider the equalizer diagram, consisting of two parallel arrows $f, g: A\to B$:

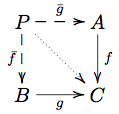

A limit over this diagram is an object $C$ and arrows to all diagram objects. The commutativity conditions for the arrows defined force $fp_A = p_B = gp_A$, and thus, keeping this enforced equation in mind, we can summarize the cone diagram as:

Now, the limit condition tells us that this is the least restrictive way we can map into $A$ with some map $p = p_A$ such that $fp = gp$, in that every other map $p': C'\to A$ with $fp' = gp'$ factors uniquely through $p$.

As usual, it is helpful to consider the situation in Set to make sense of any categorical definition, and the situation there is helped by the generalized element viewpoint: the limit object $C$ is one representative of a subobject of $A$ that, in the case of Set, contains all $x\in A$ with $f(x) = g(x)$.

Hence the word we use for this construction: the limit of the diagram above is the equalizer of $f, g$. It captures the idea of a maximal subset unable to distinguish two given functions, and it introduces a categorical way to define things by equations we require them to respect.

One important special case of the equalizer is the kernel: in a category with a null object, we have a distinguished, unique member $0$ of any homset, given by the composition of the unique arrows to and from the null object. We define the kernel $Ker(f)$ of an arrow $f$ to be the equalizer of $f, 0$. Keeping in mind the arrow-centric view on categories, we tend to denote the arrow from $Ker(f)$ to the source of $f$ by $ker(f)$.

In the category of vector spaces and linear maps, the map $0$ really is the constant map taking the value $0$ everywhere. And the kernel of a linear map $f: U\to V$ is the equalizer of $f, 0$. Thus it is some vector space $W$ with a map $i: W\to U$ such that $fi = 0i = 0$, and any other map that fulfills this condition factors uniquely through $W$. Certainly the vector space $\{u\in U: f(u) = 0\}$ fulfills the requisite condition. Nothing larger will do, since then the composite with $f$ wouldn't be $0$; and nothing smaller will do, since then the inclusion of $\{u\in U: f(u) = 0\}$ into $U$, which also satisfies the condition, would not factor through the smaller candidate.

Hence $Ker(f) = \{u\in U: f(u) = 0\}$, just like we might expect.
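For a linear map $f: \mathbb{Q}^n\to\mathbb{Q}^m$ given by a matrix, the kernel can be computed by row reduction. The sketch below assumes matrices are lists of rows and uses exact rational arithmetic from Python's `fractions` module. It returns a basis of $\{u: f(u) = 0\}$, and applying $f$ to each basis vector gives $0$, i.e. the inclusion of the kernel equalizes $f$ and $0$. The helper names `kernel_basis` and `apply_matrix` are made up for this example.

```python
from fractions import Fraction


def kernel_basis(M):
    """Basis of Ker(M) = {u : M u = 0} for a rational matrix M (list of rows).

    Row-reduces a copy of M to reduced row echelon form, then builds one
    basis vector per free (non-pivot) column.
    """
    rows = [[Fraction(x) for x in row] for row in M]
    n = len(rows[0]) if rows else 0
    pivots = []   # pivots[i] = column of the leading 1 in row i
    r = 0         # next row to place a pivot in
    for c in range(n):
        if r == len(rows):
            break
        piv = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if piv is None:
            continue  # no pivot in this column: it is a free column
        rows[r], rows[piv] = rows[piv], rows[r]
        lead = rows[r][c]
        rows[r] = [x / lead for x in rows[r]]
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                k = rows[i][c]
                rows[i] = [a - k * b for a, b in zip(rows[i], rows[r])]
        pivots.append(c)
        r += 1
    basis = []
    for fc in (c for c in range(n) if c not in pivots):
        v = [Fraction(0)] * n
        v[fc] = Fraction(1)            # set this free variable to 1 ...
        for i, pc in enumerate(pivots):
            v[pc] = -rows[i][fc]       # ... and solve for the pivot variables
        basis.append(v)
    return basis


def apply_matrix(M, v):
    """The linear map given by M, applied to the vector v."""
    return [sum(Fraction(a) * b for a, b in zip(row, v)) for row in M]


M = [[1, 2, 3],
     [2, 4, 6]]              # f : Q^3 -> Q^2
basis = kernel_basis(M)     # [[-2, 1, 0], [-3, 0, 1]] as Fractions
```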

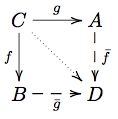

Dually, we get the coequalizer as the colimit of the equalizer diagram.

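A coequalizer, that is, a cocone over the same diagram with arrows $i_A: A\to Q$ and $i_B: B\to Q$, has to fulfill $i_Bf = i_A = i_Bg$. Thus, writing $q = i_B$, we get an object with an arrow (actually, an epimorphism out of $B$) that identifies $f$ and $g$. Hence, we can think of $q: B\to Q$ as capturing the notion of inducing equivalence classes from the functions.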

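This becomes clearer if we pick out one specific example: let $R\subseteq A\times A$ be an equivalence relation, and consider the diagram $r_1, r_2: R\rightrightarrows A$,

where $r_1$ and $r_2$ are given by the projection of the inclusion of the relation into the product onto either factor. Then the coequalizer of this setup is an object $Q$ with an arrow $q: A\to Q$ such that whenever $(x, y)\in R$, then $q(x) = q(y)$. In Set, $Q$ is the set of equivalence classes $A/R$.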
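A small sketch of the coequalizer in Set, under the same hypothetical encoding as before (sets as Python sets, functions as dicts). It glues together $f(s)$ and $g(s)$ for every $s$ using a union-find structure and represents each element of $Q$ as a frozenset, i.e. an equivalence class. Applied to the projections $r_1, r_2$ of an equivalence relation (here congruence mod 3, chosen for illustration), $q$ sends each element to its class.

```python
def coequalizer(S, T, f, g):
    """Coequalizer q : T -> Q of f, g : S -> T in Set (functions as dicts).

    Q is the set of classes of the smallest equivalence relation on T with
    f(s) ~ g(s) for all s in S; each class is represented as a frozenset.
    Computed with a union-find structure.
    """
    parent = {t: t for t in T}

    def find(t):
        while parent[t] != t:
            parent[t] = parent[parent[t]]  # path halving
            t = parent[t]
        return t

    for s in S:
        a, b = find(f[s]), find(g[s])
        if a != b:
            parent[a] = b                  # merge the two classes

    classes = {}
    for t in T:
        classes.setdefault(find(t), set()).add(t)
    return {t: frozenset(classes[find(t)]) for t in T}


# The example from the text, with R taken to be congruence mod 3 on A = {0, ..., 5}.
A = set(range(6))
R = {(x, y) for x in A for y in A if x % 3 == y % 3}
r1 = {pair: pair[0] for pair in R}
r2 = {pair: pair[1] for pair in R}
q = coequalizer(R, A, r1, r2)
```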

Pullbacks

The preimage $f^{-1}(T)$ of a subset $T\subseteq S$ along a function $f: U\to S$ is the maximal subset $V\subseteq U$ such that $f(v)\in T$ for every $v\in V$.

We recall that subsets are given by (equivalence classes of) monics, and thus we end up being able to frame this in purely categorical terms. Given a diagram like this:

where $i: T\to S$ is a monomorphism representing the subobject, we need to find an object $V$ with a monomorphism $j: V\to U$ injecting it into $U$ such that the map $fj: V\to S$ factors through $T$. Thus we're looking for dotted maps making the diagram commute, in a universal manner.

The maximality of the subobject means that any other subobject of $U$ whose composite with $f$ factors through $T$ should factor through $V$.

Suppose $A, B$ are subsets of some set $X$. Their intersection $A\cap B$ is a subset of $A$, a subset of $B$ and a subset of $X$, maximal with this property.

Translating into categorical language, and picking representatives for all subobjects in the definition, we get a diagram of monomorphisms:

where we need the inclusion of $A\cap B$ into $X$ via $A$ to be the same as the inclusion via $B$.

Definition A pullback of two maps $A\rightarrow^f C\leftarrow^g B$ is the limit of the diagram formed by these two maps, thus:

By the definition of a limit, this means that the pullback is an object $P$ with maps $\bar f: P\to B$ and $\bar g: P\to A$ satisfying $f\bar g = g\bar f: P\to C$, such that any other such object factors uniquely through this one.

For the diagram $U\rightarrow^f S\leftarrow^i T$, with $i: T\to S$ one representative monomorphism for the subobject, we get precisely the definition above for the inverse image.

For the diagram $A\rightarrow X\leftarrow B$, with both maps monomorphisms representing their subobjects, the pullback is the intersection $A\cap B$.
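In Set, the pullback of $f: A\to C$ and $g: B\to C$ can be taken to be $\{(a, b): f(a) = g(b)\}$ with the two projections. The sketch below assumes the dict-based encoding of finite functions used above, with projections named to match the text. It shows both special cases: pulling back along an inclusion $i: T\to S$ recovers the preimage $f^{-1}(T)$, and pulling back two inclusions recovers the intersection. The concrete sets are chosen only for illustration.

```python
def pullback(A, B, f, g):
    """Pullback of f : A -> C and g : B -> C in Set (functions as dicts).

    P = {(a, b) : f(a) == g(b)}, with projections g_bar : P -> A and
    f_bar : P -> B, named as in the text so that f . g_bar == g . f_bar.
    """
    P = {(a, b) for a in A for b in B if f[a] == g[b]}
    g_bar = {p: p[0] for p in P}
    f_bar = {p: p[1] for p in P}
    return P, g_bar, f_bar


# Inverse image: U = {0..9}, f(u) = u mod 3 into S = {0, 1, 2}, T = {0} as a subset of S.
U = set(range(10))
f = {u: u % 3 for u in U}
T = {0}
i = {t: t for t in T}                       # the monomorphism T -> S
P, j, k = pullback(U, T, f, i)
preimage = set(j.values())                  # {0, 3, 6, 9}

# Intersection: two subsets of X = {0..9}, each with its inclusion into X.
X = set(range(10))
evens = {x for x in X if x % 2 == 0}
threes = {x for x in X if x % 3 == 0}
incl_evens = {x: x for x in evens}
incl_threes = {x: x for x in threes}
Q, to_evens, to_threes = pullback(evens, threes, incl_evens, incl_threes)
intersection = set(to_evens.values())       # {0, 6}
```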

Pushouts

Often, especially in geometry and algebra, we construct new structures by gluing together old structures along substructures. Possibly the most popularly known example is the Möbius band: we take a strip of paper, twist it once and glue the ends together.

Similarly, in algebraic contexts, we can form amalgamated products that do roughly the same.

All these are instances of the dual to the pullback:

Definition A pushout of two maps $A\leftarrow^f C\rightarrow^g B$ is the colimit of these two maps, thus:

Hence, the pushout is an object $D$, with maps from $A$ and $B$, such that $C$ maps to the same place in $D$ both ways, and so that, contingent on this, it behaves much like a coproduct.
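The gluing intuition can be tried out in Set, where the pushout of $f: C\to A$ and $g: C\to B$ is the disjoint union of $A$ and $B$ with $f(c)$ identified with $g(c)$ for every $c\in C$. This is a sketch under the dict-based encoding used above; elements of the disjoint union are tagged `"A"` or `"B"`, and elements of $D$ are represented as frozensets (the glued classes). The example is a discrete caricature of gluing: two 3-point segments glued at both ends.

```python
def pushout(A, B, C, f, g):
    """Pushout of f : C -> A and g : C -> B in Set (functions as dicts).

    D is the disjoint union of A and B (elements tagged "A" / "B"), glued by
    identifying f(c) with g(c) for every c in C. Returns the maps A -> D and
    B -> D, with elements of D represented as frozensets.
    """
    elems = [("A", a) for a in A] + [("B", b) for b in B]
    parent = {x: x for x in elems}

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    for c in C:
        ra, rb = find(("A", f[c])), find(("B", g[c]))
        if ra != rb:
            parent[ra] = rb                # glue f(c) to g(c)

    classes = {}
    for x in elems:
        classes.setdefault(find(x), set()).add(x)
    to_D = {x: frozenset(classes[find(x)]) for x in elems}
    i_A = {a: to_D[("A", a)] for a in A}
    i_B = {b: to_D[("B", b)] for b in B}
    return i_A, i_B


# Gluing two 3-point segments A and B at both ends gives a 4-point "loop".
A = {0, 1, 2}
B = {0, 1, 2}
C = {"left", "right"}
f = {"left": 0, "right": 2}     # C -> A: the two endpoints of A
g = {"left": 0, "right": 2}     # C -> B: the two endpoints of B
i_A, i_B = pushout(A, B, C, f, g)
D = set(i_A.values()) | set(i_B.values())
```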

Free and forgetful functors

Recall how we defined a free monoid as all strings of some alphabet, with concatenation of strings the monoidal operation. And recall how we defined the free category on a graph as the category of paths in the graph, with path concatenation as the operation.

The reason we chose the word free to denote both these cases is far from a coincidence: by this point nobody will be surprised to hear that we can unify the idea of generating the most general object of a particular algebraic structure into a single categorical idea.

The idea of the free constructions, classically, is to introduce as few additional relations as possible, while still generating a valid object of the appropriate type, given a set of generators we view as placeholders, as symbols. Having a minimal amount of relations allows us to introduce further relations later, by imposing new equalities by mapping with surjections to other structures.

One of the first observations we can make in each of these cases is that a structure-preserving map out of a free structure is completely determined by where the generators, the symbols we generate from, are sent. And since the free structure is made to fulfill the axioms of whatever structure we're working with, these generators combine, even after mapping to some other structure, in a way compatible with all structure.

To make solid categorical sense of this, however, we need to couple the construction of a free algebraic structure from a set (or a graph, or...) with another construction: we can define the forgetful functor from monoids to sets by just picking out the elements of the monoid as a set; and from categories to graphs by just picking the underlying graph, and forgetting about the compositions of arrows.

Now we have what we need to pinpoint just what kind of functor the free-widget construction is. It is a functor $F: C\to D$, coupled with a forgetful functor $U: D\to C$, such that any map $c\to Ud$ in $C$ induces one unique map $Fc\to d$ in $D$.

For the case of monoids and sets, this means that if we take our generating set, and map it into the set of elements of another monoid, this generates a unique monoid homomorphism from the free monoid on our generating set to that other monoid.

This is all captured by the same kind of diagram-and-unique-map argument that we used to define the earlier object and morphism properties. We'll show the definition for the example of monoids.

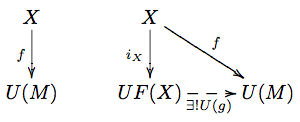

Definition A free monoid on a generating set $X$ is a monoid $F(X)$ such that there is an inclusion $i: X\to U(F(X))$, and for every function $f: X\to U(M)$ for some other monoid $M$, there is a unique homomorphism $g: F(X)\to M$ such that $f = U(g)\circ i$, or in other words such that this diagram commutes:

We can construct a map $\phi: Hom_{Mon}(F(X), M)\to Hom_{Set}(X, U(M))$ by $\phi(g) = U(g)\circ i$. The above definition says that this map is an isomorphism.
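A sketch of this universal property, assuming words in $F(X)$ are modelled as Python tuples of generators and a target monoid $M$ is passed as a binary operation plus its unit (a hypothetical encoding, not a library API). `extend` builds the homomorphism $g$ from $f$ by multiplying out the images of the letters. Uniqueness comes from the fact that any homomorphism agreeing with $f$ on one-letter words must send $(x_1,\dots,x_n)$ to $f(x_1)\cdots f(x_n)$. `phi` restricts a homomorphism back to the generators, recovering $f$.

```python
from functools import reduce
import operator

# Hypothetical encoding: a word in F(X) is a tuple of generators, and a target
# monoid M is given by its binary operation `op` and its unit element.


def inclusion(x):
    """i : X -> U(F(X)), sending a generator to the one-letter word."""
    return (x,)


def extend(f, op, unit):
    """The homomorphism g : F(X) -> M with U(g) . i == f.

    Any homomorphism agreeing with f on one-letter words must send
    (x1, ..., xn) to f(x1) * ... * f(xn), which is what g computes."""
    def g(word):
        return reduce(op, (f(x) for x in word), unit)
    return g


def phi(g):
    """phi(g) = U(g) . i : restrict a homomorphism to the generators."""
    return lambda x: g(inclusion(x))


weights = {"a": 1, "b": 10}
g = extend(lambda x: weights[x], operator.add, 0)   # into (int, +, 0)
h = extend(str.upper, operator.add, "")              # into (str, concatenation, "")
```

The second target monoid is deliberately non-commutative, so the order of the letters in a word is preserved by `h`.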

Adjunctions

Taking the way we construct free and forgetful functors as a model, we can form a powerful categorical concept, which ends up generalizing much of what we've already seen, and which also leads us on towards monads.

We draw on the definition above of free monoids to give a preliminary definition. This will be replaced later by an equivalent definition that gives more insight.

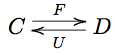

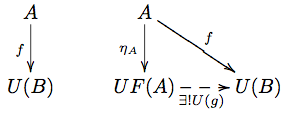

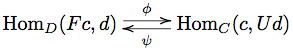

Definition A pair of functors, $F: C\to D$ and $U: D\to C$,

is called an adjoint pair or an adjunction, with $F$ called the left adjoint and $U$ called the right adjoint, if there is a natural transformation $\eta: 1_C\to UF$, and for every $f: c\to Ud$, there is a unique $g: Fc\to d$ such that the diagram below commutes, i.e. such that $f = U(g)\circ\eta_c$.

The natural transformation $\eta$ is called the unit of the adjunction.

This definition, however, has a significant amount of asymmetry: we can start with some $f: c\to Ud$ and generate a $g: Fc\to d$, while there are no immediate guarantees for the other direction. However, there is a proposition we can prove leading us to a more symmetric statement:

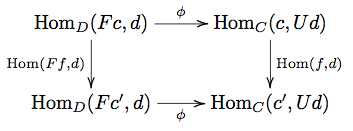

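Proposition For categories $C$, $D$ and functors $F: C\to D$, $U: D\to C$,

the following conditions are equivalent:

- $F$ is left adjoint to $U$.

- For any $c\in C$, $d\in D$, there is an isomorphism $\phi: Hom_D(Fc, d)\to Hom_C(c, Ud)$, natural in both $c$ and $d$.

moreover, the two conditions are related by the formulas

- $\phi(g) = U(g)\circ\eta_c$

- $\eta_c = \phi(1_{Fc})$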

Proof sketch For (1 implies 2), the isomorphism is given by the formula $\phi(g) = U(g)\circ\eta_c$ at the end of the statement, and it is an isomorphism exactly because of the unit property, viz. that every $f: c\to Ud$ generates a unique $g: Fc\to d$.

Naturality follows by building the naturality diagrams

and chasing through with a $g: Fc\to d$.

For (2 implies 1), we start out with a natural isomorphism $\phi$. We find the necessary natural transformation $\eta$ by considering $\eta_c = \phi(1_{Fc}): c\to UFc$.

QED.

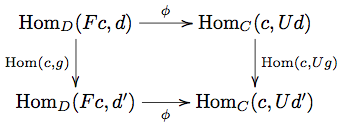

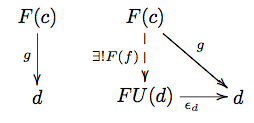

By dualizing the proof, we get the following statement:

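Proposition For categories $C$, $D$ and functors $F: C\to D$, $U: D\to C$,

the following conditions are equivalent:

- For any $c\in C$, $d\in D$, there is an isomorphism $\psi: Hom_C(c, Ud)\to Hom_D(Fc, d)$, natural in both $c$ and $d$.

- There is a natural transformation $\epsilon: FU\to 1_D$ with the property that for any $g: Fc\to d$ there is a unique $f: c\to Ud$ such that $g = \epsilon_d\circ F(f)$, as in the diagram

moreover, the two conditions are related by the formulas

- $\psi(f) = \epsilon_d\circ F(f)$

- $\epsilon_d = \psi(1_{Ud})$

where $\psi = \phi^{-1}$.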

Hence, we have an equivalent definition with higher generality, more symmetry and more horsepower, as it were:

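Definition An adjunction consists of functors $F: C\to D$ and $U: D\to C$

and a natural isomorphism $\phi: Hom_D(Fc, d)\to Hom_C(c, Ud)$, natural in $c$ and $d$.

The unit and the counit of the adjunction are natural transformations given by:

- $\eta_c = \phi(1_{Fc}): c\to UFc$,

- $\epsilon_d = \phi^{-1}(1_{Ud}): FUd\to d$.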
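For the free monoid adjunction between Set and monoids, the unit and counit have a concrete reading: $\eta_X$ sends a generator to the one-letter word, and $\epsilon_M$ multiplies a word of elements of $M$ out in $M$. The sketch below assumes the same hypothetical encoding as before (words as tuples, a monoid as an operation plus a unit) and checks the formulas $\phi(g) = U(g)\circ\eta$, $\psi(f) = \epsilon\circ F(f)$, $\eta = \phi(1_{FX})$ and $\epsilon = \psi(1_{UM})$ on small inputs. For Haskell lists, $\psi(f)$ corresponds to `foldMap f`, i.e. `mconcat . map f`.

```python
from functools import reduce
import operator

# Hypothetical encoding: words in F(X) are tuples, and a monoid M is passed
# around as its operation `op` and `unit`.


def F_arrow(h):
    """F on arrows: F(h) applies h letter by letter."""
    return lambda word: tuple(h(x) for x in word)


def eta(x):
    """Unit eta_X : X -> U(F(X)), the one-letter word."""
    return (x,)


def epsilon(op, unit):
    """Counit epsilon_M : F(U(M)) -> M, multiplying a word out in M."""
    return lambda word: reduce(op, word, unit)


def phi(g):
    """phi(g) = U(g) . eta_X, for a homomorphism g : F(X) -> M."""
    return lambda x: g(eta(x))


def psi(f, op, unit):
    """psi(f) = epsilon_M . F(f), for a function f : X -> U(M)."""
    return lambda word: epsilon(op, unit)(F_arrow(f)(word))


def identity(x):
    return x


g = psi(len, operator.mul, 1)   # from words of strings into (int, *, 1)
```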

Some of the examples we have had difficulties fitting into the limits framework show up as adjunctions:

The free and forgetful functors are adjoints; and indeed, a more natural definition of what it means to be free is that it is a left adjoint to some forgetful functor.

Curry and uncurry, from the definition of an exponential object, give the natural isomorphism of an adjoint pair: the functor $-\times A: C\to C$ has right adjoint $(-)^A: C\to C$.

Notational aid

One way to write the adjunction is as a bidirectional rewrite rule:

$\frac{Fc\to d}{c\to Ud}$,

where the statement is that the hom sets indicated by the upper and lower arrow, respectively, are transformed into each other by the unit and counit respectively. The left adjoint is the one that has the functor application on the left hand side of this diagram, and the right adjoint is the one with the functor application to the right.

Homework

Complete homework is 6 out of 11 exercises.

- Prove that an equalizer is a monomorphism.

- Prove that a coequalizer is an epimorphism.

- Prove that given any relation $R\subseteq A\times A$, its completion to an equivalence relation is the kernel of the coequalizer of the component maps of the relation.

- Prove that if the right arrow in a pullback square is a mono, then so is the left arrow. Thus the intersection as a pullback really is a subobject.

- Prove that if both the arrows in the pullback 'corner' are mono, then the arrows of the pullback cone are all mono.

- What is the pullback in the category of posets?

- What is the pushout in the category of posets?

- Prove that the exponential and the product functors above are adjoints. What are the unit and counit?

- (worth 4pt) Consider the unique functor $C\to 1$ from a category $C$ to the terminal category $1$.

  - Does it have a left adjoint? What is it?

  - Does it have a right adjoint? What is it?

- * Prove the propositions in the text.

- (worth 4pt) Suppose